
Testing intelligent virtual assistants (IVAs) so you can iterate on and improve the NLU model is a critical step in the virtual assistant lifecycle. Before you put your virtual assistant into production and people actually start using it, you need to make sure it works. The idea is simple, but it matters.

There are several ways to go about testing, and they share one goal: when you go into production, you want to deliver an experience that people love and enjoy using. This post walks through four of them.

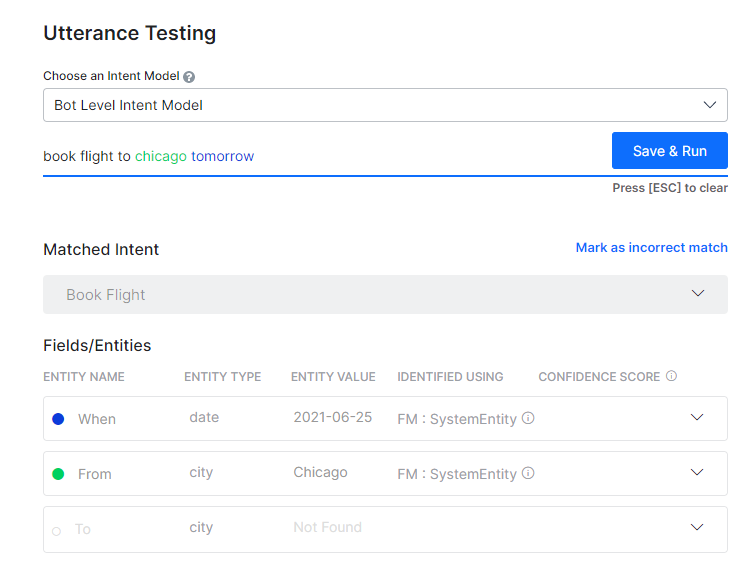

Step one – utterance testing

The first step is utterance testing. An utterance is what the user tells the AI bot, in writing or in speech. Utterance testing checks whether the virtual assistant understands a single utterance and recognizes its intent, that is, the main purpose behind what the user said.

Is the bot capturing the correct entities? Does it do what you want for this one utterance? This is the bare minimum.
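A single-utterance check can be sketched in a few lines of Python. This is a minimal illustration, not the testing tool of any particular platform: `toy_nlu`, `NLUResult`, the intent names, and the `amount` entity are all hypothetical stand-ins for whatever NLU engine you actually call.

```python
import re
from dataclasses import dataclass, field


@dataclass
class NLUResult:
    intent: str
    entities: dict = field(default_factory=dict)


def toy_nlu(utterance: str) -> NLUResult:
    """Hypothetical stand-in for a real NLU engine (keyword rules only)."""
    text = utterance.lower()
    entities = {}
    # Capture the first number in the utterance as an "amount" entity.
    amount = re.search(r"\$?(\d+(?:\.\d+)?)", text)
    if amount:
        entities["amount"] = float(amount.group(1))
    if "transfer" in text or "send" in text:
        return NLUResult("transfer_money", entities)
    if "balance" in text:
        return NLUResult("check_balance", entities)
    return NLUResult("fallback", entities)


def check_utterance(utterance, expected_intent, expected_entities):
    """Return a list of mismatches; an empty list means the test passed."""
    result = toy_nlu(utterance)
    problems = []
    if result.intent != expected_intent:
        problems.append(f"intent: expected {expected_intent}, got {result.intent}")
    for name, value in expected_entities.items():
        found = result.entities.get(name)
        if found != value:
            problems.append(f"entity {name}: expected {value}, got {found}")
    return problems


print(check_utterance("Please transfer $50 to savings",
                      "transfer_money", {"amount": 50.0}))
```

The check answers the two questions above for one utterance: did the assistant pick the right intent, and did it capture the right entities?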

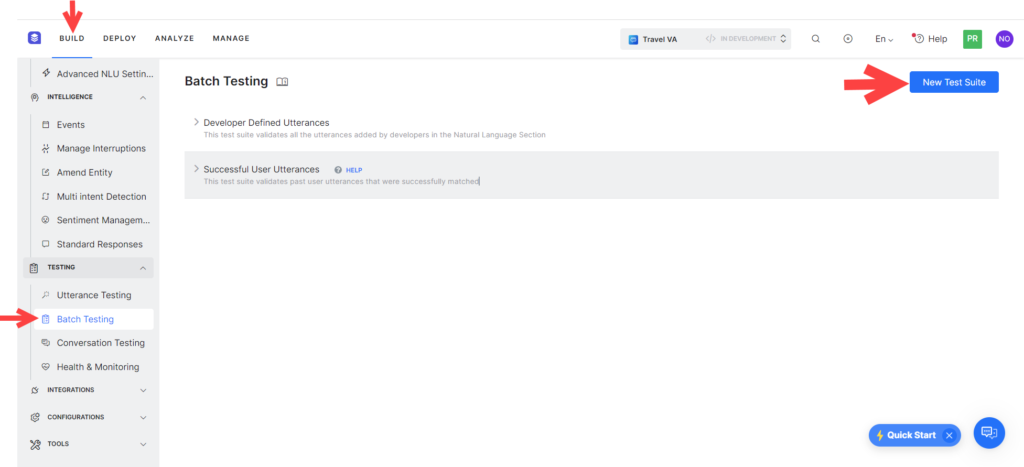

Step two – batch testing

The second step in testing your virtual assistant is batch testing: collecting a significant number of test cases and running them together. Machine learning (ML) models are typically trained on hundreds or thousands of different utterances. Over time, you'll want to build up a test suite so you can run batch tests covering dozens, hundreds, or thousands of different ways that someone can ask the virtual assistant a question. Batch testing shows whether there are any gaps in the virtual assistant's understanding.

Once you identify the gaps, you can add training data that covers them and retrain your virtual assistant so that it becomes smarter over time. This will be explored in another blog post.
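To make the idea concrete, here is a rough Python sketch of a batch run. It assumes a hypothetical `toy_classifier` in place of a real NLU model and a tiny hand-written `TEST_CASES` list; a real suite would be far larger and would call your actual assistant.

```python
from collections import defaultdict


def toy_classifier(utterance: str) -> str:
    """Hypothetical stand-in for a trained intent model (keyword rules only)."""
    text = utterance.lower()
    if "balance" in text:
        return "check_balance"
    if "transfer" in text or "send" in text:
        return "transfer_money"
    return "fallback"


# Each test case pairs one phrasing with the intent it should resolve to.
TEST_CASES = [
    ("What is my balance?", "check_balance"),
    ("Show me my account balance", "check_balance"),
    ("How much money do I have?", "check_balance"),
    ("Transfer 20 dollars to Sam", "transfer_money"),
    ("Send money to my savings", "transfer_money"),
    ("Move 100 to checking", "transfer_money"),
]


def run_batch(cases, classify):
    """Run every case; return overall accuracy and the misses grouped by expected intent."""
    failures = defaultdict(list)
    passed = 0
    for utterance, expected in cases:
        predicted = classify(utterance)
        if predicted == expected:
            passed += 1
        else:
            failures[expected].append((utterance, predicted))
    return passed / len(cases), dict(failures)


accuracy, gaps = run_batch(TEST_CASES, toy_classifier)
print(f"accuracy: {accuracy:.0%}")
for intent, misses in gaps.items():
    for utterance, predicted in misses:
        print(f"gap in {intent}: {utterance!r} -> {predicted}")
```

The grouped misses are the useful part: each one is a phrasing the model did not understand, and so a candidate for new training data.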

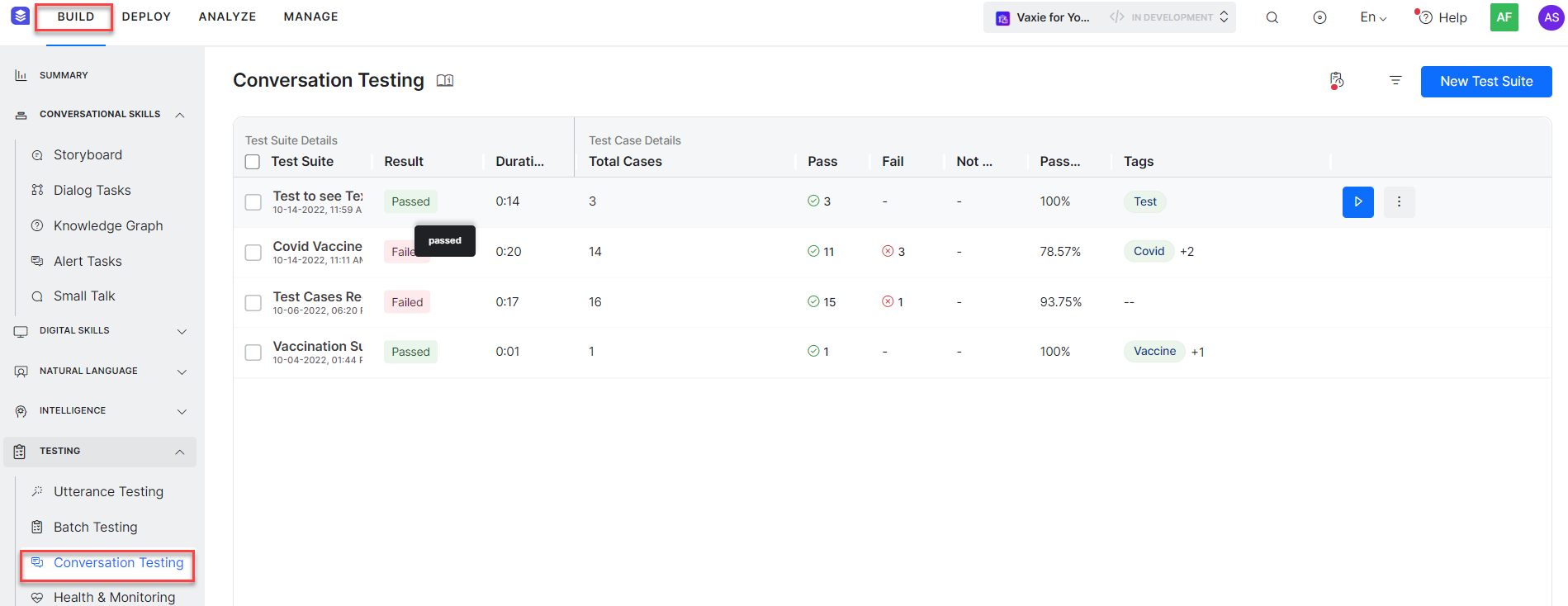

The next step is to test the conversation.

Step three – testing the conversation

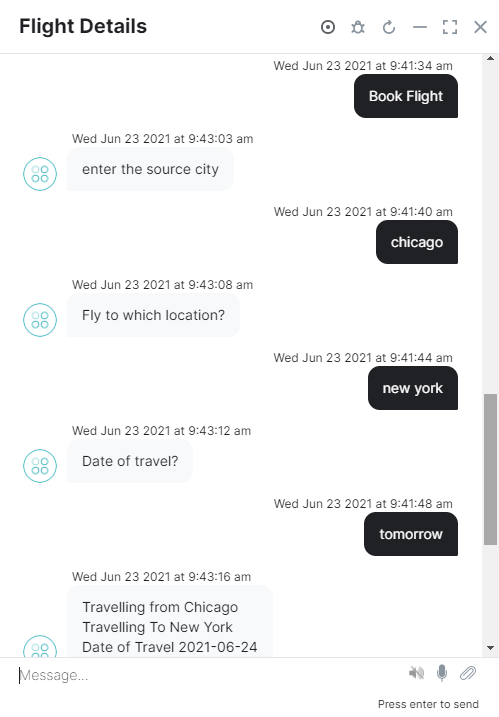

Conversation testing involves selecting the channel on which you are going to interact with the virtual assistant and testing the entire conversation experience. The channel could be chat, voice, a mobile app, or a smart speaker. Whichever channel it is, you walk through the entire conversation flow a user would have with the virtual assistant.

So conversation testing focuses on the whole conversational experience. How does interacting with this virtual assistant look and feel? Is it a good experience, or does it need improvement?
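Part of a conversation test can be automated by replaying a scripted dialogue and checking each reply. The sketch below is only an illustration under stated assumptions: `ToyBot` is a hypothetical three-state assistant, and `SCRIPT` pairs each user turn with a fragment the reply is expected to contain. It does not cover look and feel, which still needs a person on the real channel.

```python
class ToyBot:
    """Hypothetical stand-in for a deployed assistant on one channel."""

    def __init__(self):
        self.state = "start"

    def reply(self, message: str) -> str:
        text = message.lower()
        if self.state == "start" and "transfer" in text:
            self.state = "awaiting_amount"
            return "How much would you like to transfer?"
        if self.state == "awaiting_amount" and any(ch.isdigit() for ch in text):
            self.state = "awaiting_confirmation"
            return "Should I go ahead? (yes/no)"
        if self.state == "awaiting_confirmation" and text.strip() == "yes":
            self.state = "start"
            return "Done. Your transfer is complete."
        return "Sorry, I didn't get that."


# (user message, fragment the reply should contain), in conversation order.
SCRIPT = [
    ("I want to transfer money", "how much"),
    ("50 dollars", "go ahead"),
    ("yes", "transfer is complete"),
]


def run_conversation(bot, script):
    """Play each turn in order; return (turn number, reply) for every turn that missed."""
    misses = []
    for turn, (user_message, expected_fragment) in enumerate(script, start=1):
        reply = bot.reply(user_message)
        if expected_fragment not in reply.lower():
            misses.append((turn, reply))
    return misses


print(run_conversation(ToyBot(), SCRIPT))
```

Because the bot keeps state between turns, a script like this catches problems that single-utterance tests cannot, such as a confirmation step that only works when the earlier turns went as expected.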

Step four – testing with pilot programs

Finally, one of the best ways to test your virtual assistant is through pilot programs. An example of a pilot program would be to launch your virtual assistant by giving 50 or 100 people access to it. Call it a focus group. Maybe they're friends and family, or maybe the testing cohort is your company's employees. You pick a small number of users, give them access, and then let them run with it.

After people use the virtual assistant, you collect data to understand where it's working well and where it's not. What does the overall experience look like? You can then take that data and use it to improve your virtual assistant over time.

By using this combination of tests on your intelligent virtual assistant, you'll make sure it delivers a phenomenal experience once you hit production.
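As a rough illustration of what "take that data and use it" can mean, the Python sketch below summarizes a pilot log by intent. Everything in it is an assumption made for the example: the `PILOT_LOG` records, the `resolved` flag, and the 0.8 threshold are invented, and a real pilot would export its own fields.

```python
from collections import Counter

# Hypothetical pilot records: one entry per user request during the pilot.
PILOT_LOG = [
    {"user": "u1", "intent": "check_balance", "resolved": True},
    {"user": "u2", "intent": "check_balance", "resolved": True},
    {"user": "u3", "intent": "transfer_money", "resolved": False},
    {"user": "u1", "intent": "transfer_money", "resolved": True},
    {"user": "u4", "intent": "fallback", "resolved": False},
    {"user": "u2", "intent": "transfer_money", "resolved": False},
]


def resolution_rates(log):
    """Share of requests per intent that ended with the user's need resolved."""
    totals, resolved = Counter(), Counter()
    for record in log:
        totals[record["intent"]] += 1
        if record["resolved"]:
            resolved[record["intent"]] += 1
    return {intent: resolved[intent] / totals[intent] for intent in totals}


def needs_work(rates, threshold=0.8):
    """Intents below the (assumed) threshold, worst first."""
    weak = [intent for intent, rate in rates.items() if rate < threshold]
    return sorted(weak, key=lambda intent: rates[intent])


rates = resolution_rates(PILOT_LOG)
print(needs_work(rates))
```

A summary like this points you at the intents where pilot users struggled most, which is where extra training data or a redesigned flow is likely to pay off first.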

Ready to test your own bots and want a detailed step-by-step guide? Check out our bot documentation on testing and debugging for a complete, in-depth testing process.

Want to learn more?

We are here to support your learning journey. Ready to start building a bot but don’t know where to start? Learn conversational AI skills and get certified on the Kore.ai Experience Optimization (XO) platform.

As a leader in conversational AI platforms and solutions, Kore.ai helps enterprises automate front- and back-office business interactions to deliver exceptional experiences for their customers, agents and employees.

Why not try our pre-built virtual assistant? Start automating self-service through voice and digital channels for both your customers and employees today.
