
Building a large, high-quality corpus for natural language processing (NLP) is no easy task. Textual data can be large, cumbersome, and unwieldy, and unlike clean numbers or categorical data in rows and columns, differences between documents can be difficult to spot. In organizations where documents are shared, modified, and shared again before being archived, the problem of duplication can become overwhelming.

To find exact duplicates, comparing every pair of strings is the simplest approach, but it is neither efficient nor sufficient. Hashing each document with an algorithm such as MD5 or SHA-1 gives the correct result faster, but near-duplicates will still go undetected. Text similarity is useful for finding files that look alike. There are different approaches to this, and each has its own way of defining which documents count as duplicates. In addition, the definition of a duplicate affects the type of processing required and the results obtained. Below are some options.
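As a rough illustration of the hashing approach and its blind spot, the sketch below groups documents by the SHA-1 digest of their text. The function name `group_exact_duplicates` and the sample documents are made up for this example; only the standard library is used.

```python
import hashlib
from collections import defaultdict


def group_exact_duplicates(docs):
    """Group document names whose text has the same SHA-1 digest."""
    groups = defaultdict(list)
    for name, text in docs.items():
        digest = hashlib.sha1(text.encode("utf-8")).hexdigest()
        groups[digest].append(name)
    # Keep only digests shared by more than one document
    return [sorted(names) for names in groups.values() if len(names) > 1]


# Hypothetical documents: b.txt copies a.txt, c.txt differs by one character
docs = {
    "a.txt": "The quick brown fox.",
    "b.txt": "The quick brown fox.",
    "c.txt": "The quick brown fox!",
}
print(group_exact_duplicates(docs))  # [['a.txt', 'b.txt']]
```

Note that c.txt is not reported even though it differs from the others by a single character, which is why hashing alone cannot find near-duplicates.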

Using SAS Visual Text Analytics, you can configure and perform this task during your corpus analysis journey with the Python SWAT package or PROC SQL in SAS.

Work with Python SWAT

The Python SWAT package provides a Python interface to SAS Cloud Analytic Services (CAS). In this article, we call the profileText action, pull down the output tables, and perform duplicate detection in Python.

Prepare the data

The corpus we are going to explore is the second release of the American National Corpus (ANC2). It is also one of the reference corpora for the profileText action. The corpus contains over 22,000,000 words of written and spoken texts and comes with both annotated data and the plain text.

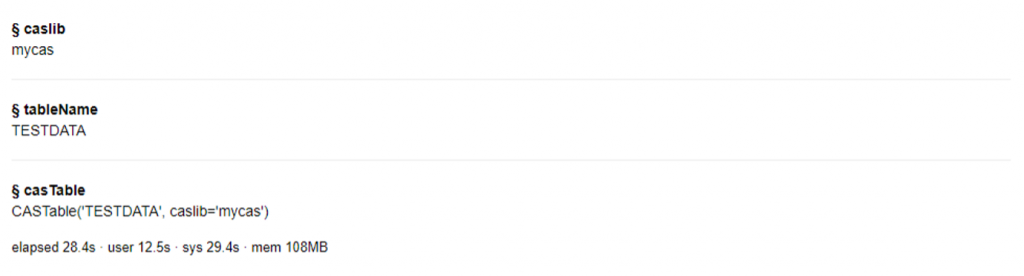

We put all 13295 plain text files under /home/anc2. After connecting to the CAS server, we create a table TESTDATA with ANC2 data.

    # Import libraries
    import swat
    from collections import Counter
    import pandas as pd
    import itertools
    import random

    # Connect to CAS server
    s = swat.CAS("cloud.example.com", 5570)

    # Add the caslib mycas with the path to the corpus directory
    s.addCaslib(caslib='mycas',
                datasource={"srcType": "path"},
                session=False,
                path="/home",
                subdirectories="yes")

    # Load the txt files under anc2/ into the CASTable TESTDATA
    s.loadTable(casout={"name": "TESTDATA", "replace": True},
                caslib="mycas",
                importOptions={"fileType": "Document"},
                path="anc2")

Out:

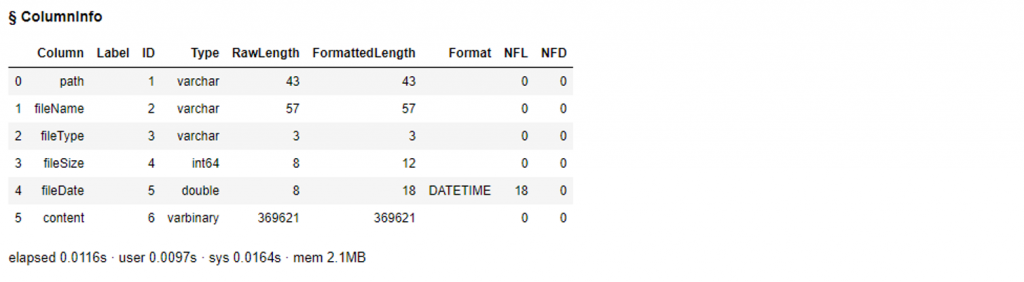

We can easily check the table using, for example, columnInfo() or head().

    # View column summary for testdata
    anc2 = s.CASTable("TESTDATA", replace=True)
    anc2.columninfo()

Out:

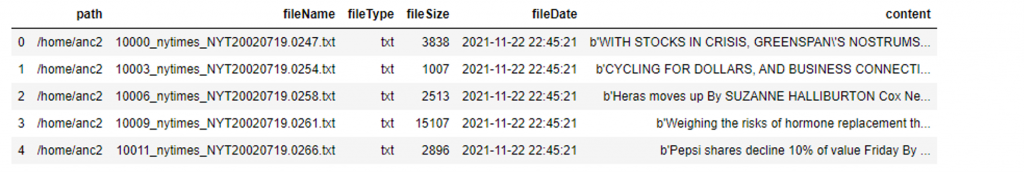

    # Check on the first five rows
    anc2.head()


Profile the data

We load the textManagement action set and call the profileText action to profile the ANC2 data. The casOut parameter is required to run the action; its output table contains statistics on information complexity, information density, and vocabulary diversity. For duplicate identification, we need the results from two other output tables, documentOut and intermediateOut. A CASTable can be converted to a SASDataFrame with the CASTable.to_frame() method, which pulls all of the data down to the client for further exploration.

    # Load the action set textManagement
    s.loadactionset('textManagement')

    # Call the action profileText
    results = s.profileText(table=dict(caslib="mycas", name="testdata"),
                            documentid="fileName",
                            text="content",
                            language="english",
                            casOut=dict(name="casOut", replace=True),
                            documentOut=dict(name="docOut", replace=True),
                            intermediateOut=dict(name="interOut", replace=True))

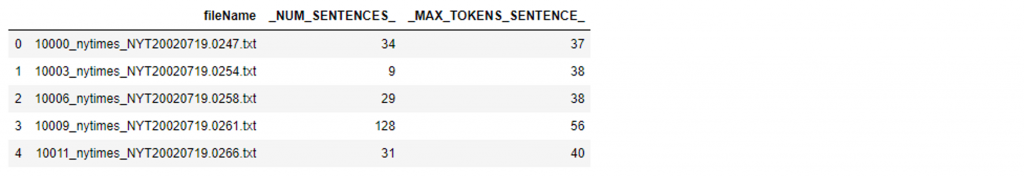

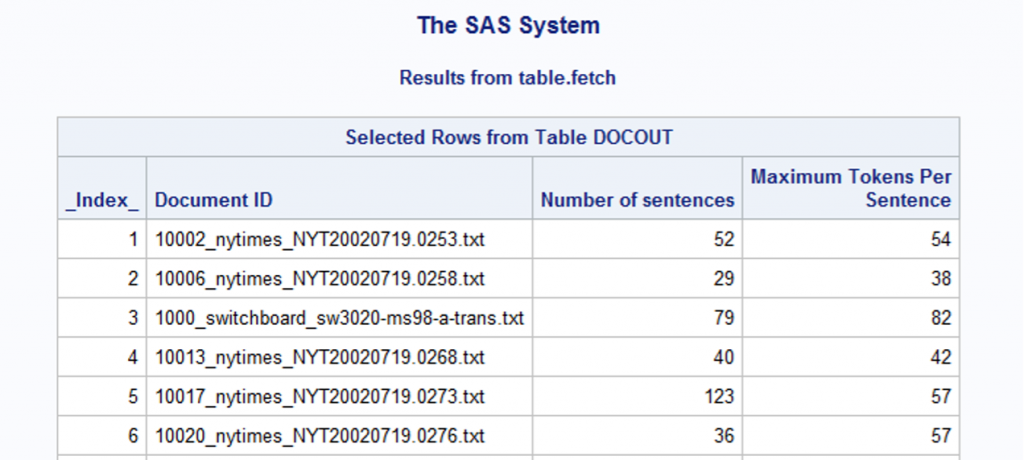

documentOut contains document-level information complexity statistics. For each file, we know the total number of sentences and the maximum number of tokens in a sentence.

    # Convert the CASTable docOut to SASDataFrame
    df_docout = s.CASTable('docOut').to_frame()
    df_docout.head()

Out:

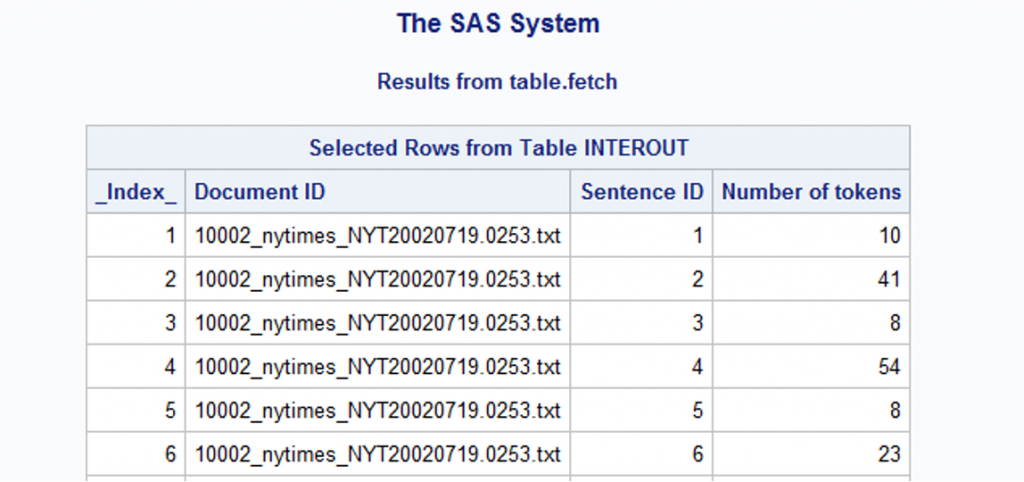

The other output, intermediateOut, contains the token count of each sentence in each document.

    # Convert the CASTable interOut to SASDataFrame
    df_interout = s.CASTable('interOut').to_frame()
    df_interout.head()
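To make the relationship between the two tables concrete, here is a small sketch in plain Python. It assumes that the document-level maximum is simply the largest per-sentence token count, which is an assumption made for illustration rather than a statement about how the action computes it. The rows and the function name `document_signature` are hypothetical.

```python
from collections import defaultdict

# Hypothetical rows in the shape of interOut:
# (fileName, sentence id, number of tokens in that sentence)
inter_rows = [
    ("a.txt", 1, 12), ("a.txt", 2, 7), ("a.txt", 3, 20),
    ("b.txt", 1, 12), ("b.txt", 2, 7), ("b.txt", 3, 20),
    ("c.txt", 1, 5), ("c.txt", 2, 9),
]


def document_signature(rows):
    """Return {fileName: (number of sentences, max tokens in a sentence)}."""
    tokens_per_doc = defaultdict(list)
    for file_name, _sentence_id, num_tokens in rows:
        tokens_per_doc[file_name].append(num_tokens)
    return {name: (len(counts), max(counts))
            for name, counts in tokens_per_doc.items()}


signatures = document_signature(inter_rows)
print(signatures["a.txt"])  # (3, 20)
print(signatures["c.txt"])  # (2, 9)
```

In this toy data, a.txt and b.txt end up with the same pair of values while c.txt does not.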


Filter the data

Our goal is to find identical documents as well as documents that are not identical but substantially similar. To narrow the search down to good candidates, we assume that if two files have the same number of sentences and the same maximum number of tokens in a sentence, they have a higher chance of being duplicates or near-duplicates.

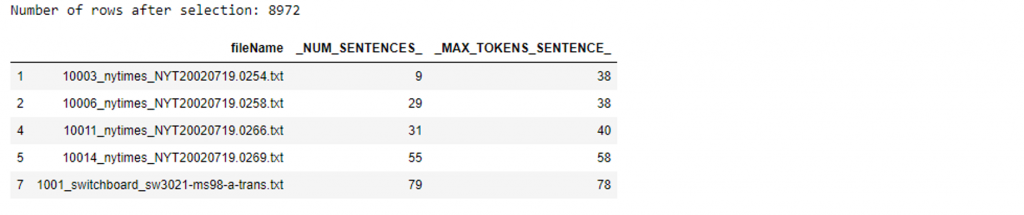

Under this assumption, we keep the documents whose value pair of _NUM_SENTENCES_ and _MAX_TOKENS_SENTENCE_ occurs more than once, leaving 8972 of the 13295 files.

    # Keep the docs whose pair of column values appears more than once
    df_docout_selected = df_docout[
        df_docout.groupby(['_NUM_SENTENCES_', '_MAX_TOKENS_SENTENCE_'])
                 ['_NUM_SENTENCES_'].transform('size') > 1]
    print(f"Number of rows after selection: {len(df_docout_selected)}")
    df_docout_selected.head()


You can reduce the results further if you have a focus for your search, by adding conditions such as keeping only documents with more than 200 sentences, or only documents whose longest sentence has more than 80 tokens.

    # (Optional) Reduce search results by filtering docs by condition
    df_docout_selected = df_docout_selected[df_docout_selected._NUM_SENTENCES_ > 200]
    df_docout_selected = df_docout_selected[df_docout_selected._MAX_TOKENS_SENTENCE_ > 80]

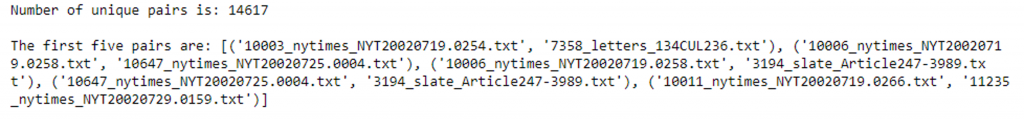

Next, we prepare the pairs of files that share the same _NUM_SENTENCES_ and _MAX_TOKENS_SENTENCE_ values. Note that sometimes more than two files share the same values. The total number of unique pairs is 14617.

    # Keep only the interout data for files that are selected
    search_dict = df_docout_selected.set_index('fileName').T.to_dict('list')
    df_interout_selected = df_interout[df_interout['fileName'].isin(search_dict.keys())]

    # Get all unique combinations of every two docs
    check_tmp_dict = Counter([tuple(v) for v in search_dict.values()])
    file_pair_lst = []
    for c in check_tmp_dict:
        file_pair = [k for k, v in search_dict.items() if tuple(v) == c]
        if len(file_pair) == 2:
            file_pair_lst.append(tuple(file_pair))
        else:
            pair_lst = list(itertools.combinations(file_pair, 2))
            file_pair_lst += pair_lst
    print(f"Number of unique pairs is: {len(file_pair_lst)}\n")
    print(f"The first five pairs are: {file_pair_lst[:5]}")
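The pairing step can also be pictured without pandas. The sketch below is an illustrative alternative, not the code used in this article: it groups file names by their value pair in one pass and then takes every two-file combination within each group, so a group of k files contributes k(k-1)/2 pairs. The function name `candidate_pairs` and the sample values are hypothetical.

```python
from collections import defaultdict
from itertools import combinations


def candidate_pairs(signatures):
    """Pair up files that share the same (num sentences, max tokens) values."""
    groups = defaultdict(list)
    for name, signature in signatures.items():
        groups[signature].append(name)
    pairs = []
    for names in groups.values():
        # A group with a single file yields no combinations
        pairs.extend(combinations(sorted(names), 2))
    return pairs


# Hypothetical value pairs for six files
signatures = {
    "a.txt": (3, 20), "b.txt": (3, 20), "c.txt": (3, 20),
    "d.txt": (2, 9), "e.txt": (2, 9),
    "f.txt": (7, 31),
}
pairs = candidate_pairs(signatures)
print(len(pairs))  # 4: three pairs from the group of three, one from the group of two
```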


Compare the data

Finding near-duplicates is more difficult than finding exact duplicates. There is no gold standard for the similarity threshold between two near-duplicates. Based on the _NUM_TOKENS_ value for each _SENTENCE_ID_ in the interOut table from earlier, we add one more assumption: two documents have a very high chance of being near-duplicates if they share the same number of tokens in sentences at the same positions, where the sentence indices are chosen at random and make up a defined ratio of the total number of sentences.

For example, fileA and fileB have 20 sentences each and the defined ratio is 0.5. We use pandas.Series.sample to randomly select 10 sentences from each of the two files. Passing the same random_state value to both calls is required to ensure that sentences at the same positions are drawn from the two files. If the two sentences in every selected pair have the same number of tokens, fileA and fileB are considered near-duplicates.
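The example above can be sketched in plain Python. This version swaps pandas.Series.sample for the standard library's `random.Random(seed).sample`, which plays the same role: one seeded draw of positions is applied to both files. The function name `sampled_match` and the token counts are made up for illustration.

```python
import random


def sampled_match(tokens_a, tokens_b, ratio, seed=1):
    """Compare token counts at the same randomly chosen sentence positions."""
    if len(tokens_a) != len(tokens_b):
        return False
    n_to_check = round(ratio * len(tokens_a))
    # One seeded draw of positions, reused for both documents
    positions = random.Random(seed).sample(range(len(tokens_a)), n_to_check)
    return all(tokens_a[i] == tokens_b[i] for i in positions)


# Hypothetical token counts for two files with 20 sentences each
file_a = [12, 7, 20, 5, 9, 14, 3, 8, 11, 6, 10, 15, 4, 13, 9, 7, 18, 2, 16, 5]
file_b = list(file_a)
file_b[4] = 10  # one sentence differs in token count

print(sampled_match(file_a, list(file_a), ratio=0.5))  # True: identical counts
print(sampled_match(file_a, file_b, ratio=1.0))        # False: every sentence is checked
```

With a ratio below 1.0, whether file_a and file_b match depends on whether position 4 happens to be drawn, which is the source of the run-to-run variation in this method.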

Now we are ready for the comparison.

    # Ratio of sentences to sample from each document
    # (assumed value matching the 0.5 example above; the original setting is not shown)
    ratio_tocheck = 0.5

    # Compare doc pairs
    possibleDuplicate = []
    for (a, b) in file_pair_lst:
        # Keep only the column _NUM_TOKENS_
        tmp_a = df_interout_selected[df_interout_selected['fileName'] == a].loc[:, "_NUM_TOKENS_"]
        tmp_b = df_interout_selected[df_interout_selected['fileName'] == b].loc[:, "_NUM_TOKENS_"]
        # Reset the index so both Series are labeled identically for pandas.Series.compare
        tmp_a = tmp_a.reset_index(drop=True)
        tmp_b = tmp_b.reset_index(drop=True)
        # Select sentences by pandas.Series.sample with the defined ratio
        num_sent_tocheck = round(ratio_tocheck * len(tmp_a))
        tmp_a = tmp_a.sample(num_sent_tocheck, random_state=1)
        tmp_b = tmp_b.sample(num_sent_tocheck, random_state=1)
        # An empty comparison result (shape (0, 2)) means no sampled sentence differs
        if tmp_a.compare(tmp_b).shape == (0, 2):
            possibleDuplicate.append([a, b])

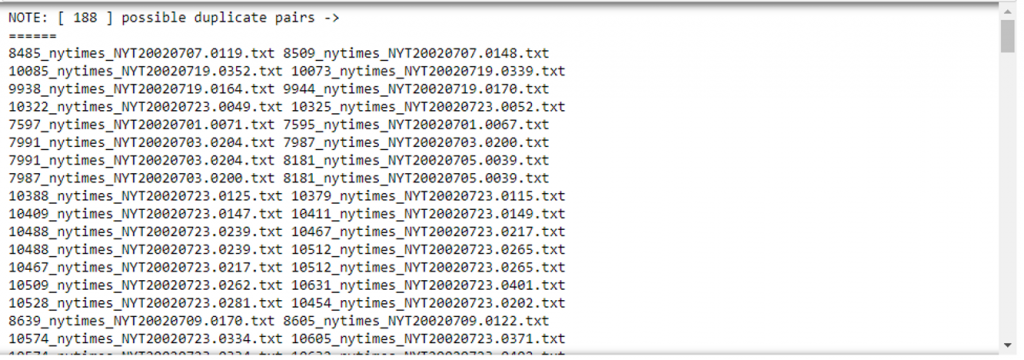

The possible duplicate list contains 188 pairs of filenames.

    # View the result
    view = '======\n' + '\n'.join([" ".join(p) for p in possibleDuplicate]) + '\n======'
    print(f"NOTE: [ {len(possibleDuplicate)} ] possible duplicate pairs -> \n{view}")


Check the results

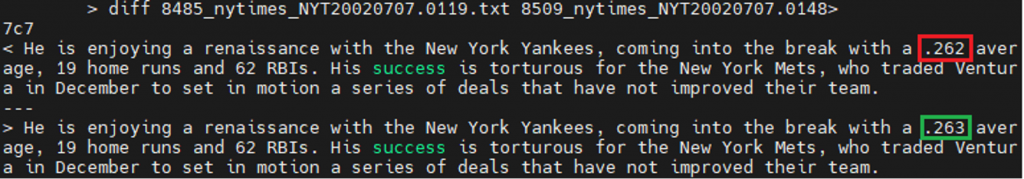

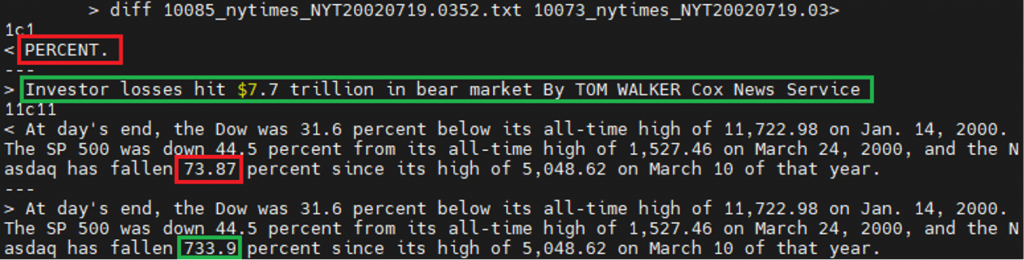

Now it is time to see how far we have come in our duplicate search. By examining the content of each pair, it is not hard to determine that there are 133 duplicates and 55 near-duplicates. Let’s look at two of the near-duplicate pairs we found. These documents have about 50 sentences each, and they differ in only 2 sentences.
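One possible way to examine a candidate pair by hand is to diff the two documents sentence by sentence. The sketch below uses difflib.SequenceMatcher from the standard library and assumes the text has already been split into sentences. The function name `differing_sentences` and the sample sentences are hypothetical.

```python
import difflib


def differing_sentences(sentences_a, sentences_b):
    """Return the sentence spans that are not identical in the two documents."""
    matcher = difflib.SequenceMatcher(a=sentences_a, b=sentences_b, autojunk=False)
    return [
        (sentences_a[i1:i2], sentences_b[j1:j2])
        for tag, i1, i2, j1, j2 in matcher.get_opcodes()
        if tag != "equal"
    ]


# Hypothetical near-duplicate pair, already split into sentences
doc_a = ["The meeting is on Monday.", "Please bring the report.", "Thanks."]
doc_b = ["The meeting is on Tuesday.", "Please bring the report.", "Thanks."]

for left, right in differing_sentences(doc_a, doc_b):
    print(left, "->", right)
```

An empty result means the two sentence lists are identical, so the pair is an exact duplicate at the sentence level.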

Work with PROC SQL in SAS

SQL is one of the many languages built into the SAS system. Using PROC SQL, you have access to a powerful tool for manipulating and querying data.

Prepare the data

We load the folder /home/anc2, which holds all of the plain text files, into the table TESTDATA.

    libname mycas cas;

    proc cas;
       table.addcaslib /
          caslib = "mycas"
          datasource = {srctype="path"}
          session = False
          path = "/home"
          subdirectories = "yes";
    run;
       table.loadTable /
          casout = {name="testdata", replace=True}
          caslib = "mycas"
          importOptions = {fileType="DOCUMENT"}
          path = "anc2";
    run;
    quit;

If you have already saved the data in a .sashdat file, you can load it directly.

    proc cas;
       table.save /
          table = {name="testdata"}
          caslib = "mycas"
          name = "ANC2.sashdat";
    run;
       table.loadtable /
          casout = {name="testdata", replace=true}
          path = "ANC2.sashdat"
          caslib = "mycas";
    run;
    quit;

Profile the data

We call the profileText action in the textManagement action set to profile the data.

    proc cas;
       textManagement.profiletext /
          table = {name="testdata"}
          documentid = "fileName"
          text = "content"
          language = "english"
          casOut = {name="casOut", replace=True}
          documentOut = {name="docOut", replace=True}
          intermediateOut = {name="interOut", replace=True};
    run;
       table.fetch /
          table = {name="docOut"};
    run;
       table.fetch /
          table = {name="interOut"};
    run;
    quit;

Filter the data

We keep the documents whose value pairs occur more than once.

    proc sql;
       create table search1 as
       select *
       from mycas.docout
       group by _NUM_SENTENCES_, _MAX_TOKENS_SENTENCE_
       having count(*) > 1;
    quit;

We generate all pairs of file combinations that share the same values.

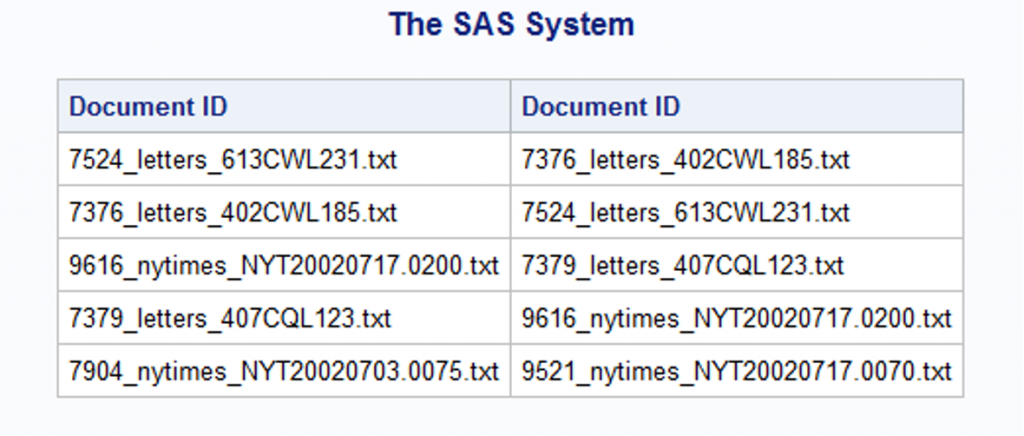

    proc sql;
       create table search2 as
       select a.fileName as fileA, b.fileName as fileB
       from (select * from search1) a
            cross join
            (select * from search1) b
       where a._NUM_SENTENCES_ = b._NUM_SENTENCES_
         and a._MAX_TOKENS_SENTENCE_ = b._MAX_TOKENS_SENTENCE_
         and a.fileName <> b.fileName;
    quit;

    proc print data=search2(obs=5);
    run;

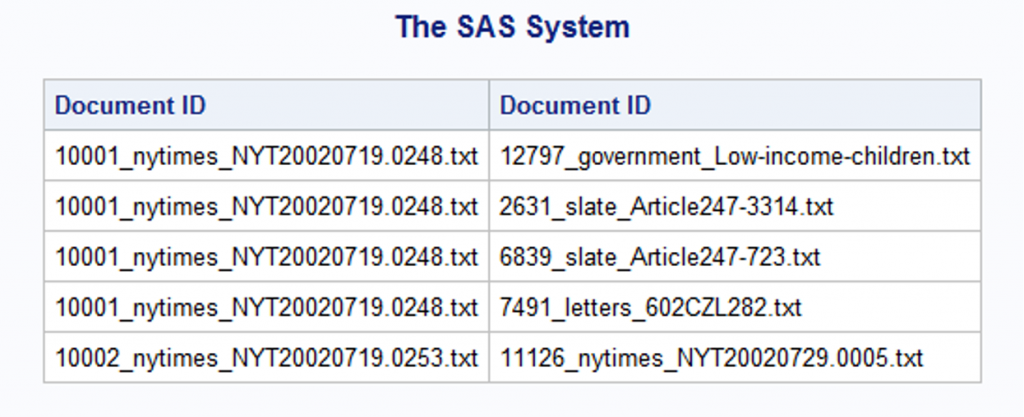

Looking at the table search2, we notice that it would be better to keep only unique pairs, so that the same two files are not compared twice.

    proc sql;
       create table search3 as
       select distinct fileA, fileB
       from search2
       where search2.fileA < search2.fileB;
    quit;

    proc print data=search3(obs=5);
    run;

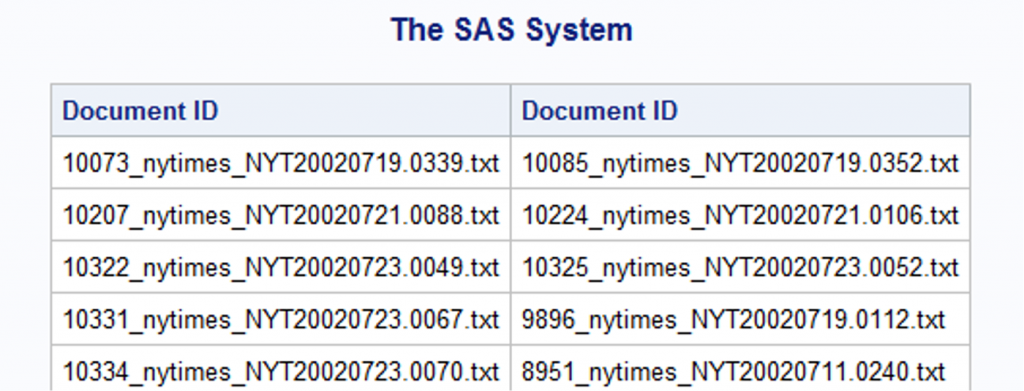

Compare the data

We keep the assumption that two documents have a very high chance of being near-duplicates if they share the same number of tokens in sentences at the same positions, with the sentence indices chosen at random and making up a defined ratio of the total number of sentences. Here we use the rand('uniform') function, which by default generates an observation from the continuous uniform distribution on the interval (0,1). Setting the condition to "between .2 and .7" gives each sentence a 50% chance of being selected, so roughly half of the sentences are compared. The similarity threshold can be adjusted by changing the range, for example "where rand('uniform') between .2 and .9", which means that about 70% of the sentences in the documents are examined.

    proc sql;
       create table search4 as
       select fileA as f1, fileB as f2
       from search3
       where not exists (
          select * from (
             select tmp1A, tmp2A from (
                select tmp1._NUM_TOKENS_ as tmp1A, tmp1._SENTENCE_ID_ as tmp1B,
                       tmp2._NUM_TOKENS_ as tmp2A, tmp2._SENTENCE_ID_ as tmp2B
                /* sasout1 is assumed to be a libref that points at the interOut table */
                from (select * from sasout1.interout interout1
                      where interout1.fileName = f1) tmp1,
                     (select * from sasout1.interout interout2
                      where interout2.fileName = f2) tmp2
                where tmp1B = tmp2B)
             where rand('uniform') between .2 and .7)
          where tmp1A <> tmp2A);
    quit;
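The share of sentences kept by a range filter on a uniform draw equals the width of the range only on average. The small Python simulation below mirrors the idea behind the "between" condition. It is a sketch for intuition, not a reproduction of the SAS random number generator, and the function name `kept_fraction` is made up.

```python
import random


def kept_fraction(low, high, n_rows=100_000, seed=0):
    """Estimate the share of rows whose uniform(0, 1) draw falls in [low, high]."""
    rng = random.Random(seed)
    kept = sum(low <= rng.random() <= high for _ in range(n_rows))
    return kept / n_rows


print(kept_fraction(0.2, 0.7))  # close to 0.5
print(kept_fraction(0.2, 0.9))  # close to 0.7
```

For a single document with only a few dozen sentences, the actual share can drift noticeably from the expected 50% or 70%, because each sentence is kept or dropped independently.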

Check the results

We use the table testdata to verify the results. Out of 172 pairs, 133 are duplicates and 39 are near-duplicates.

    proc sql;
       create table Duplicates as
       select f1, f2
       from search4
       where not exists (
          (select content from mycas.testdata tmp where tmp.fileName = f1)
          except
          (select content from mycas.testdata tmp where tmp.fileName = f2)
       );
    quit;

    proc sql;
       create table nearDuplicates as
       select f1, f2
       from search4
       where exists (
          (select content from mycas.testdata tmp where tmp.fileName = f1)
          except
          (select content from mycas.testdata tmp where tmp.fileName = f2)
       );
    quit;

Conclusions

Exploring the statistics produced by the profileText action gives a practical way to gain insight, not only by comparison with a reference corpus, but also at the token, sentence, and document levels within the corpus itself. Because the sentences to compare are chosen at random, we may observe different results each time we run this duplicate identification method. The smaller the ratio, the more candidate pairs we get. You might be surprised to learn that if we set the ratio to 0.1, the result is still only around 207 pairs, just slightly more than the 172 pairs found with a ratio of 0.5. The method does not seem to over-match, because two files must have the same number of sentences and the same maximum number of tokens in a sentence before we pair them up. This requirement gives us a safer place to start our search.
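The effect of the ratio can be worked out for a simple case. Suppose two files have n sentences, exactly d of them differ in token count, and k = round(ratio × n) positions are drawn uniformly without replacement. The pair is then accepted only if none of the d differing positions is drawn, which happens with probability C(n − d, k) / C(n, k). These are simplifying assumptions for illustration, and the function name `pass_probability` is hypothetical.

```python
from math import comb


def pass_probability(n_sentences, n_differing, ratio):
    """Chance that a random sample of positions misses every differing sentence."""
    k = round(ratio * n_sentences)
    return comb(n_sentences - n_differing, k) / comb(n_sentences, k)


# A pair like the ones described earlier: about 50 sentences, 2 of them different
print(round(pass_probability(50, 2, 0.5), 3))  # 0.245
print(round(pass_probability(50, 2, 0.1), 3))  # 0.808
```

Under these assumptions, lowering the ratio from 0.5 to 0.1 makes such a pair far more likely to be accepted, which is consistent with a smaller ratio returning more pairs.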

Textual near-duplicate identification is simple to understand, but it is not easy to develop a standard that covers every type of near-duplicate. In this article, we offer one way of describing near-duplicates, in which the difference lies in a few sentences or words while the order is preserved. It does not cover cases in which the sentences of two documents are arranged in a different order, or in which some pieces of text are merged so that the sentence indices no longer line up and the results are affected. These cases are fun to think about, and they could turn into next-level detection.

How would you define similarity for near-duplicates?
