You can now register machine learning (ML) models built in Amazon SageMaker Canvas to the Amazon SageMaker Model Registry with a single click, which lets you operationalize ML models in production. Canvas is a visual interface that enables business analysts to generate accurate ML predictions on their own, without requiring any ML experience or writing a single line of code. Although Canvas is a great place for development and experimentation, to derive value from these models you need to operationalize them, that is, deploy them in a production environment where they can be used to make predictions or decisions. With the model registry integration, you can now store all model artifacts, including metadata and performance metrics, in a central repository and plug them into your existing model deployment CI/CD processes.

A model registry is a repository that catalogs ML models, manages model versions, associates metadata (such as training metrics) with a model, manages the approval status of a model, and deploys models to production. After creating a model version, you typically want to evaluate its performance before deploying it to a production endpoint. If it meets your requirements, you can update the approval status of the model version to approved. Setting the status to approved can initiate CI/CD deployment for the model. If the model version does not meet your requirements, you can update the approval status in the registry to rejected, which prevents the model from being deployed to an escalated environment.

The model registry plays a key role in the model deployment process because it packages all model information and enables automation of model promotion to production environments. Below are some of the ways a model registry can help operationalize ML models:

- Version control – A model registry allows you to keep track of different versions of your ML models, which is essential when deploying models to production. By tracking model versions, you can easily revert to a previous version if a new version causes problems.

- Collaboration – A model registry enables collaboration between data scientists, engineers, and other stakeholders by providing a centralized location to store, share, and access models. This can help streamline the deployment process and ensure that everyone is working with the same model.

- Governance – A model registry can help with compliance and governance by providing an audit trail of model changes and deployments.

Overall, a model registry can help streamline the process of deploying ML models to production by providing version control, collaboration, monitoring, and governance.

Solution overview

In our use case, we assume the role of a business user in the marketing department of a mobile phone operator, and we have successfully built an ML model in Canvas to identify customers at potential risk of churn. Thanks to the predictions generated by our model, we now want to move it from our development environment to production. However, before our model is deployed to a production endpoint, it must be reviewed and approved by the central MLOps team. This team is responsible for managing model versions, reviewing all metadata associated with the model (such as training metrics), managing the approval status of every ML model, deploying approved models to production, and automating model deployment with CI/CD. To simplify the process of deploying our model to production, we use Canvas’ integration with the model registry and register our model for review by our MLOps team.

The workflow steps are as follows:

1. Upload a new dataset with the current customer population to Canvas. For a complete list of supported data sources, see Import data into Canvas.

2. Build ML models and analyze their performance metrics. See the instructions for building a custom ML model in Canvas and evaluating the model’s performance.

3. Register the best performing versions in the model registry for review and approval.

4. Deploy the approved model version to a production endpoint for real-time inference.

You can perform steps 1–3 in Canvas without writing a single line of code.

Prerequisites

For this walkthrough, make sure the following prerequisites are met:

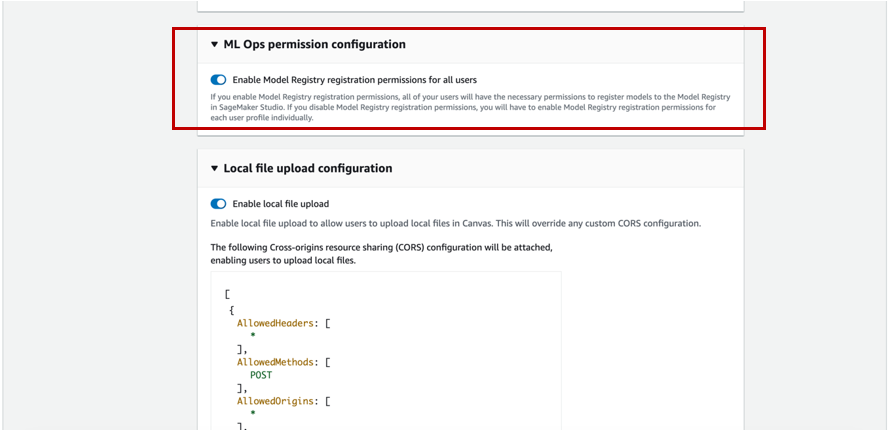

- To register model versions in the model registry, the Canvas administrator must grant the necessary permissions to the Canvas user, which you can manage in the SageMaker domain that hosts your Canvas application. For more information, see the Amazon SageMaker Developer Guide. When assigning permissions to your Canvas user, you must choose whether to allow the user to register versions of their model in the same AWS account.

- Implement the prerequisites mentioned in Predict customer churn with no-code machine learning using Amazon SageMaker Canvas.

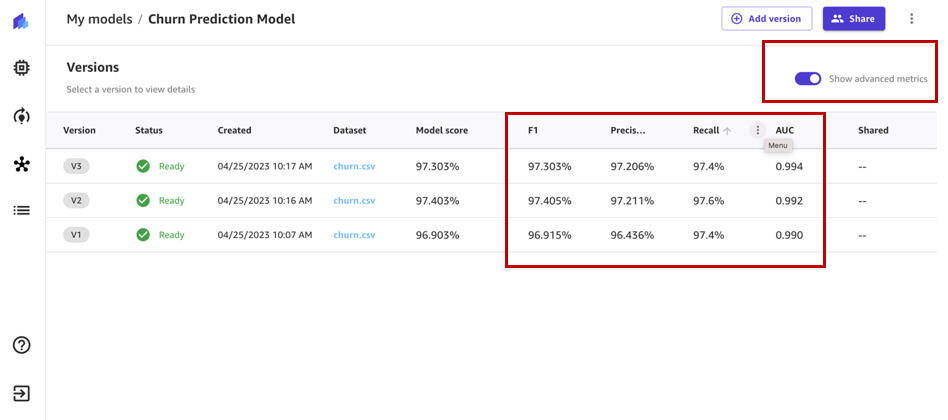

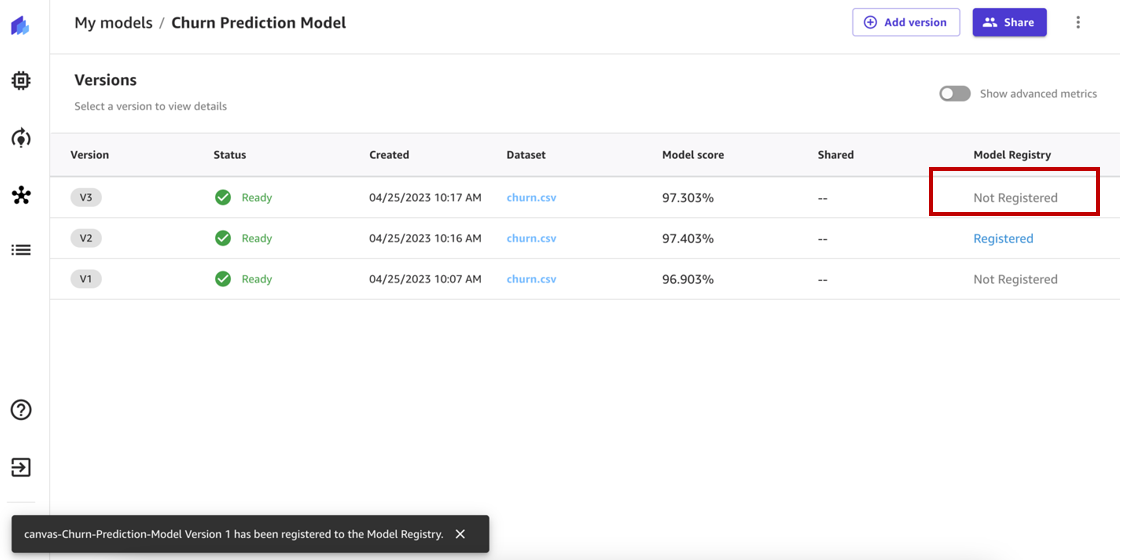

You should now have three model versions trained on historical churn prediction data in Canvas:

- V1 was trained with all 21 features and the quick build configuration, with a model score of 96.903%

- V2 was trained with 19 features (the phone and state features were removed) and the quick build configuration, with an improved accuracy of 97.403%

- V3 was trained with the standard build configuration, with a model score of 97.03%

Use the customer churn prediction model

Turn on Show advanced metrics and review the objective metrics associated with each model version so that we can select the best performing model for registration in the model registry.

Based on performance metrics, we choose version 2 to register.

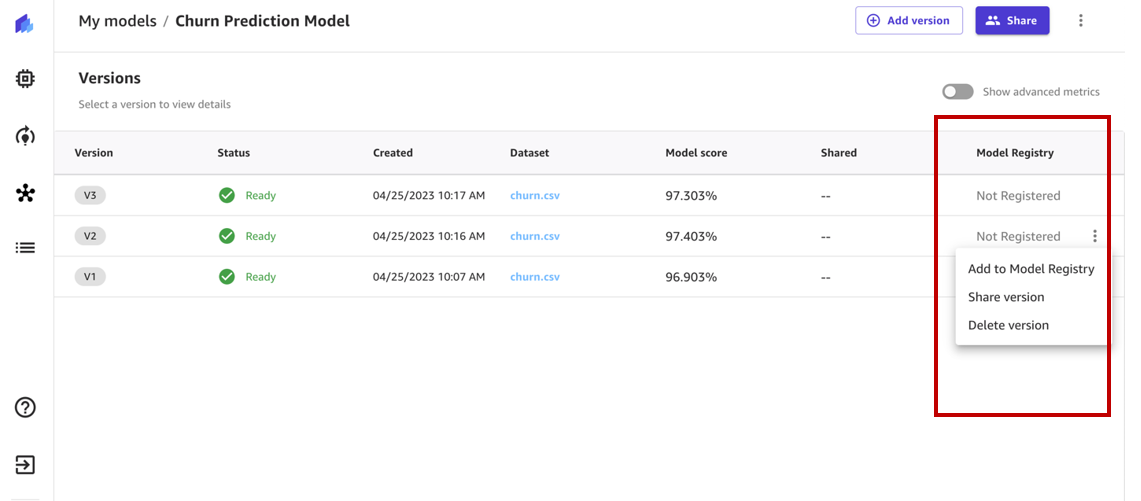

The model registry keeps track of all the model versions you build to solve a specific problem in a model group. When you create a Canvas model and register it in the model registry, it is added to the model group as a new model version.

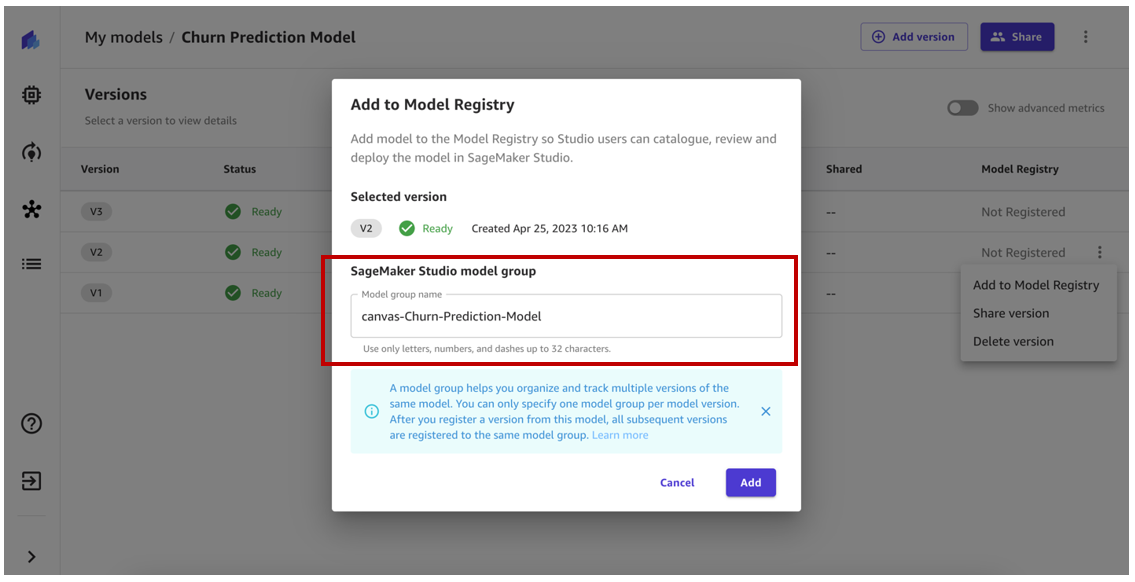

During registration, a model group is automatically created in the model registry. Optionally, you can rename it or use an existing model group in the model registry.

For this example, we use the auto-generated model group name and choose Add.

Our model version should now be registered in the model group in the model registry. If we had another model version registered, it would be registered in the same model group.

The status of the model version should have changed from Not Registered to Registered.

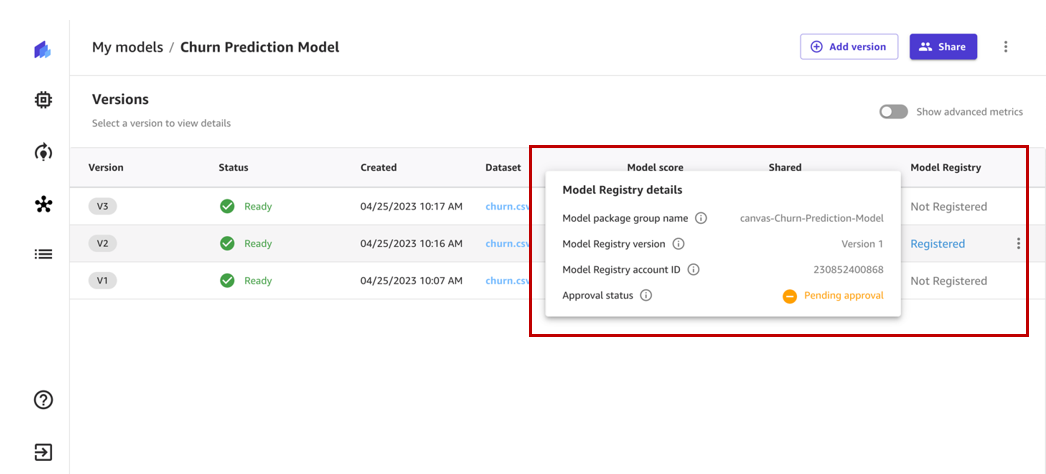

When we drill down into the status, we can view the model registry details, which include the model group name, model registry account ID, and approval status. After registration, the status changes to Pending Approval, meaning that the model is registered in the model registry but is awaiting review and approval by a data scientist or MLOps team member, and can only be deployed to an endpoint once it is approved.

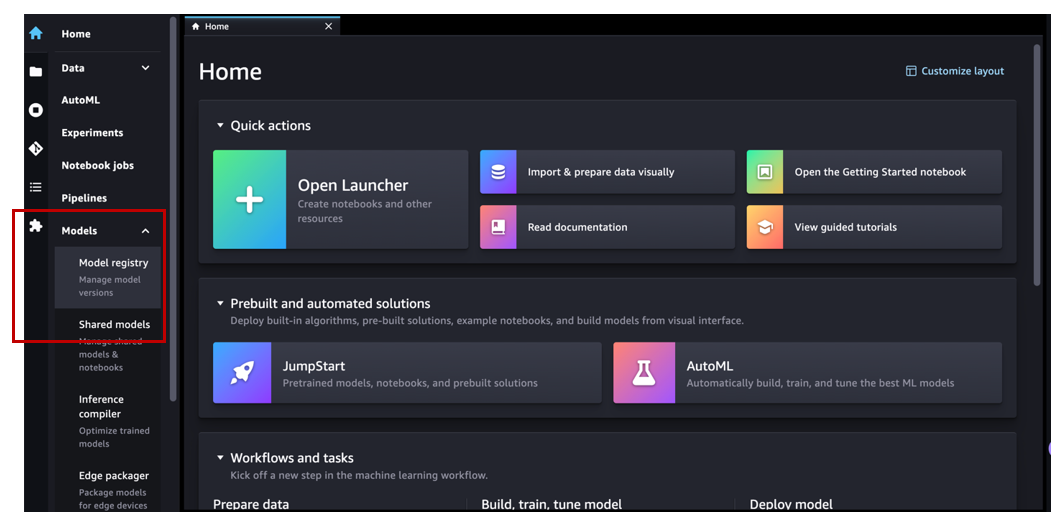

Now let’s switch to Amazon SageMaker Studio and assume the role of an MLOps team member. Under Models in the navigation pane, choose Model registry to open the main page of the model registry.

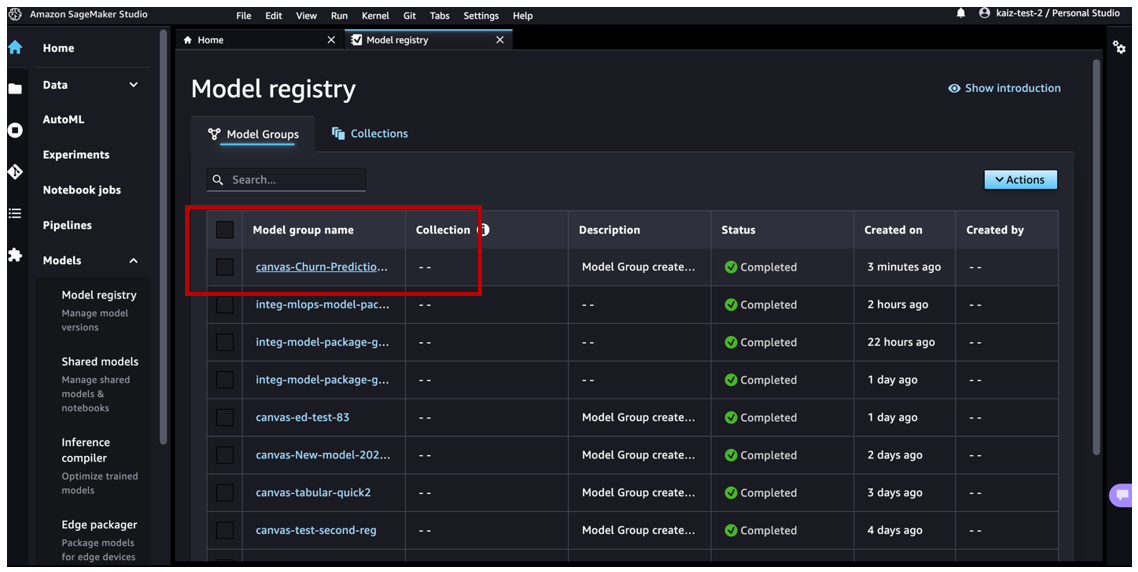

We can see the model group canvas-Churn-Prediction-Model that Canvas automatically created for us.

Select a model to view all versions registered in that model group, and then view the corresponding model details.

If we open the details for version 1, we can see that the Activity tab keeps track of all events that occur on the model.

On the Model quality tab, we can view model metrics, precision/recall curves, and confusion matrix plots to understand model performance.

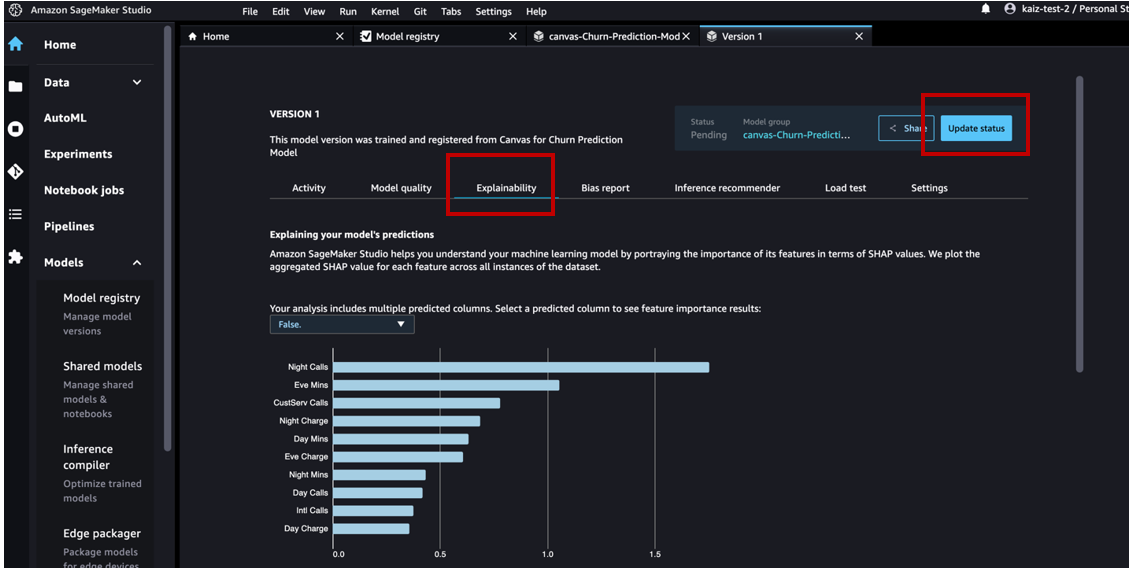

On the Explainability tab, we can look at the features that had the most impact on the model’s performance.

After reviewing the model artifacts, we can change the approval status from Pending to Approved.

Now we can see the updated activity.

The Canvas business user can now see that the status of the registered model has changed from Pending Approval to Approved.
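The status change made in the Studio UI can also be scripted. The following is a minimal sketch, assuming `sm_client` is a boto3 SageMaker client (for example `boto3.client("sagemaker")`) and that you already have the model package ARN of the version to update. The helper name `set_approval_status` and the ARN used in the usage comment are hypothetical; `update_model_package` is the boto3 call that does the work.

```python
def set_approval_status(sm_client, model_package_arn, status, note=""):
    """Set the approval status of one model version in the model registry.

    sm_client is assumed to be a boto3 SageMaker client.
    """
    allowed = {"Approved", "Rejected", "PendingManualApproval"}
    if status not in allowed:
        raise ValueError(f"status must be one of {sorted(allowed)}")

    request = {
        "ModelPackageArn": model_package_arn,
        "ModelApprovalStatus": status,
    }
    if note:
        # Optional free-text comment stored with the status change.
        request["ApprovalDescription"] = note

    sm_client.update_model_package(**request)
    return request


# Hypothetical usage (requires AWS credentials and a real ARN):
#   import boto3
#   set_approval_status(boto3.client("sagemaker"),
#                       "<model package ARN>", "Approved", "Reviewed by MLOps")
```

Rejecting a version works the same way with `"Rejected"` as the status.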

As a member of the MLOps team, now that we have approved this ML model, let’s deploy it to an endpoint.

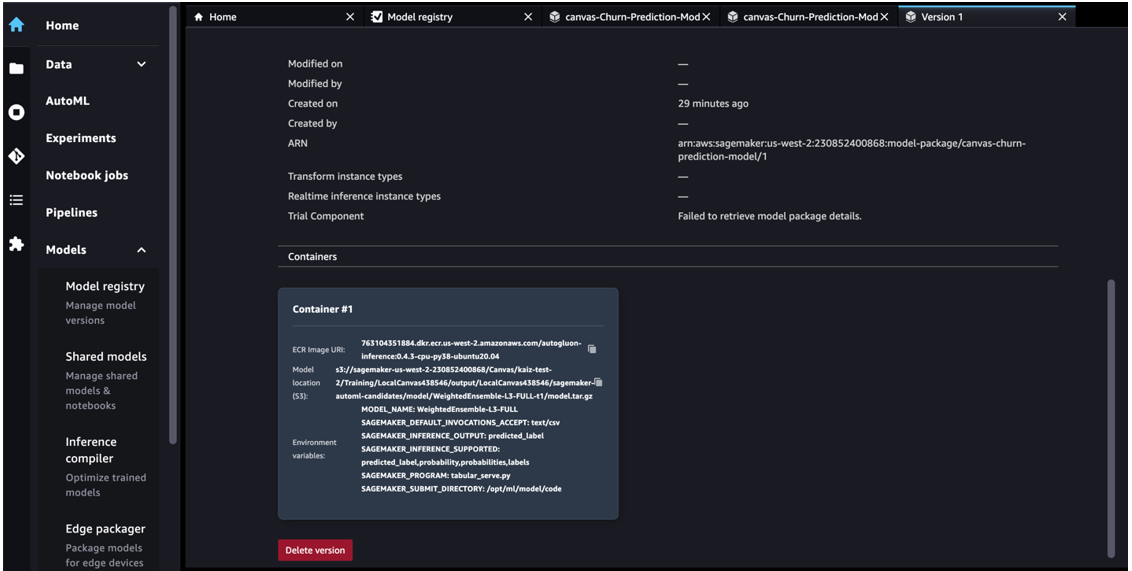

In Studio, go to the main Model Registry page and choose the canvas-Churn-Prediction-Model model group. Choose the version to be deployed and go to the Settings tab.

Browse to get model package ARN details from the selected model version in the model registry.
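If you prefer to look up the ARN programmatically rather than in the Studio UI, the sketch below shows one way to do it. It assumes `sm_client` is a boto3 SageMaker client and picks the most recently created approved version in a model group, which may differ from the version you selected manually. The helper name `latest_approved_arn` is hypothetical.

```python
def latest_approved_arn(sm_client, group_name):
    """Return the ARN of the newest approved model version in a model group.

    sm_client is assumed to be a boto3 SageMaker client.
    Returns None when the group has no approved version.
    """
    response = sm_client.list_model_packages(
        ModelPackageGroupName=group_name,
        ModelApprovalStatus="Approved",
        SortBy="CreationTime",
        SortOrder="Descending",
        MaxResults=1,
    )
    summaries = response.get("ModelPackageSummaryList", [])
    return summaries[0]["ModelPackageArn"] if summaries else None


# Hypothetical usage (requires AWS credentials):
#   import boto3
#   arn = latest_approved_arn(boto3.client("sagemaker"),
#                             "canvas-Churn-Prediction-Model")
```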

Open a notebook in Studio and run the following code to deploy the model to an endpoint. Replace the model package ARN with your own model package ARN.
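A minimal sketch of such a deployment is shown here. It assumes `sm_client` is a boto3 SageMaker client and `role_arn` is an execution role that SageMaker can assume. The helper name `deploy_model_package`, the `canvas-churn` name prefix, and the `ml.m5.xlarge` instance type are illustrative assumptions, not values taken from this post.

```python
import time


def deploy_model_package(sm_client, model_package_arn, role_arn,
                         name_prefix="canvas-churn",
                         instance_type="ml.m5.xlarge"):
    """Create a model, endpoint config, and endpoint from a registered version.

    sm_client is assumed to be a boto3 SageMaker client.
    Returns the name of the endpoint that was requested.
    """
    suffix = time.strftime("%Y%m%d-%H%M%S")
    model_name = f"{name_prefix}-model-{suffix}"
    config_name = f"{name_prefix}-config-{suffix}"
    endpoint_name = f"{name_prefix}-endpoint-{suffix}"

    # A model backed by the registered (and approved) model package.
    sm_client.create_model(
        ModelName=model_name,
        ExecutionRoleArn=role_arn,
        Containers=[{"ModelPackageName": model_package_arn}],
    )
    # One production variant that receives all traffic.
    sm_client.create_endpoint_config(
        EndpointConfigName=config_name,
        ProductionVariants=[{
            "VariantName": "AllTraffic",
            "ModelName": model_name,
            "InitialInstanceCount": 1,
            "InstanceType": instance_type,
        }],
    )
    # Endpoint creation is asynchronous; the call returns before it is ready.
    sm_client.create_endpoint(
        EndpointName=endpoint_name,
        EndpointConfigName=config_name,
    )
    return endpoint_name


# Hypothetical usage (requires AWS credentials):
#   import boto3
#   sm = boto3.client("sagemaker")
#   name = deploy_model_package(sm, "<model package ARN>", "<execution role ARN>")
#   sm.get_waiter("endpoint_in_service").wait(EndpointName=name)
```

The endpoint takes several minutes to reach the InService state, so wait for it before sending requests.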

After the endpoint is created, you can see it tracked as an event on the Activity tab of the model registry.

You can double-click an endpoint name to get its details.

Now that we have an endpoint, let’s use it to get real-time inference. Replace the endpoint name in the following code snippet with your own:
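A minimal sketch of a real-time request is shown here. It assumes `runtime_client` is a boto3 SageMaker Runtime client (for example `boto3.client("sagemaker-runtime")`) and that the endpoint accepts CSV rows whose columns match the features used for training, in the same order and without the target column. The helper names and the example row are hypothetical, and the exact response format depends on how the model was packaged.

```python
import csv
import io


def rows_to_csv(rows):
    """Serialize a list of feature rows into a headerless CSV string."""
    buffer = io.StringIO()
    csv.writer(buffer, lineterminator="\n").writerows(rows)
    return buffer.getvalue()


def predict(runtime_client, endpoint_name, rows):
    """Send feature rows to the endpoint and return the raw response text.

    runtime_client is assumed to be a boto3 SageMaker Runtime client.
    """
    response = runtime_client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="text/csv",
        Accept="text/csv",
        Body=rows_to_csv(rows).encode("utf-8"),
    )
    # The response body is a stream; read and decode it.
    return response["Body"].read().decode("utf-8")


# Hypothetical usage (requires AWS credentials and a live endpoint):
#   import boto3
#   runtime = boto3.client("sagemaker-runtime")
#   print(predict(runtime, "<endpoint name>", [[...feature values...]]))
```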

Clean up

To avoid future charges, delete the resources you created while following this post. This includes logging out of Canvas and deleting the deployed SageMaker endpoint. Canvas bills you for the duration of your session, so we recommend logging out of Canvas when you are not using it. For more information, see Logging Out of Amazon SageMaker Canvas.
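Deleting the endpoint can be scripted as well. The sketch below assumes `sm_client` is a boto3 SageMaker client and removes the endpoint together with the endpoint configuration and models it references. The helper name `delete_endpoint_resources` is hypothetical. It does not log you out of Canvas and does not touch the registered model versions.

```python
def delete_endpoint_resources(sm_client, endpoint_name):
    """Delete an endpoint plus the endpoint config and models behind it.

    sm_client is assumed to be a boto3 SageMaker client.
    Returns the names of the resources that were deleted.
    """
    # Look up the dependent resources before deleting anything.
    endpoint = sm_client.describe_endpoint(EndpointName=endpoint_name)
    config_name = endpoint["EndpointConfigName"]
    config = sm_client.describe_endpoint_config(EndpointConfigName=config_name)
    model_names = [v["ModelName"] for v in config["ProductionVariants"]]

    sm_client.delete_endpoint(EndpointName=endpoint_name)
    sm_client.delete_endpoint_config(EndpointConfigName=config_name)
    for name in model_names:
        sm_client.delete_model(ModelName=name)

    return {"endpoint": endpoint_name, "config": config_name, "models": model_names}


# Hypothetical usage (requires AWS credentials):
#   import boto3
#   delete_endpoint_resources(boto3.client("sagemaker"), "<endpoint name>")
```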

Conclusion

In this post, we discussed how Canvas can help operationalize ML models in production environments without requiring ML expertise. In our example, we showed how an analyst can quickly build a highly accurate predictive ML model without writing any code and register it in the model registry. The MLOps team can then review it and either reject the model or approve it and start the CI/CD deployment process.

To start your low-code/no-code ML journey, see Amazon SageMaker Canvas.

Special thanks to everyone who contributed to the launch:

Back end:

- Huayuan (Alice) Wu

- Kritafat Pugdeetosapol

- Yanda Hu

- John He

- Esha Dutta

- Prashant

Front end:

About the authors

Janisha Anand is a Senior Product Manager on the SageMaker Low/No Code ML team, which includes SageMaker Autopilot. Janisha enjoys coffee, staying active, and spending time with family.

Kritafat Pugdeetosapol is a software development engineer at Amazon SageMaker and works primarily with low-code and no-code SageMaker products.

Huayuan (Alice) Wu is a software development engineer at Amazon SageMaker. She focuses on building user-friendly ML tools and products. Outside of work, she enjoys the outdoors, yoga, and hiking.