
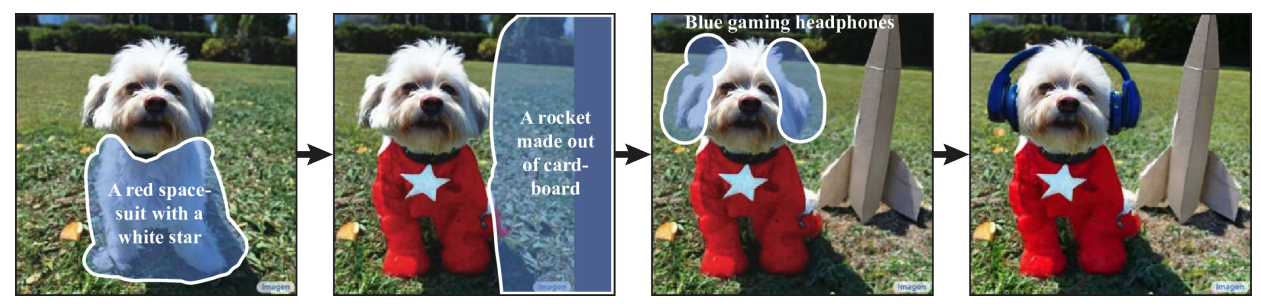

In the past few years, text-to-image generation research has seen an explosion of breakthroughs (notably Imagen, Parti, DALL-E 2, etc.) that have naturally carried over into related topics. In particular, text-guided image editing (TGIE) is a practical task that involves editing generated and photographed visuals rather than completely redoing them. Quick, automated, and controllable editing is a convenient solution when recreating visuals would be time-consuming or infeasible (e.g., tweaking objects in vacation photos or perfecting fine-grained details on a cute puppy generated from scratch). Further, TGIE represents a substantial opportunity to improve the training of foundational models themselves. Multimodal models require diverse data to train properly, and TGIE can enable the generation and recombination of high-quality, scalable synthetic data that, perhaps most importantly, can provide methods to optimize the distribution of training data along any given axis.

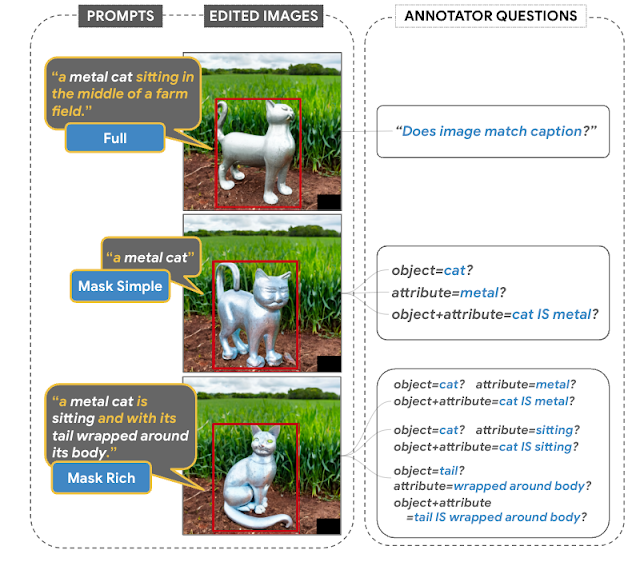

In “Imagen Editor and EditBench: Advancing and Evaluating Text-Guided Image Inpainting,” to be presented at CVPR 2023, we introduce Imagen Editor, a state-of-the-art solution for the task of masked inpainting, i.e., when a user provides text instructions alongside an overlay or “mask” (usually generated within a drawing-type interface) indicating the area of the image to be modified. We also introduce EditBench, a method that gauges the quality of image editing models. EditBench goes beyond the commonly used coarse-grained “does this image match this text” methods and drills down to various types of attributes, objects, and scenes for a more fine-grained understanding of model performance. In particular, it puts strong emphasis on the faithfulness of image-text alignment without losing sight of image quality.

Given an image, a user-defined mask, and a text prompt, Imagen Editor makes localized edits to the designated areas. The model meaningfully incorporates the user's intent and performs photorealistic edits.

Imagen Editor

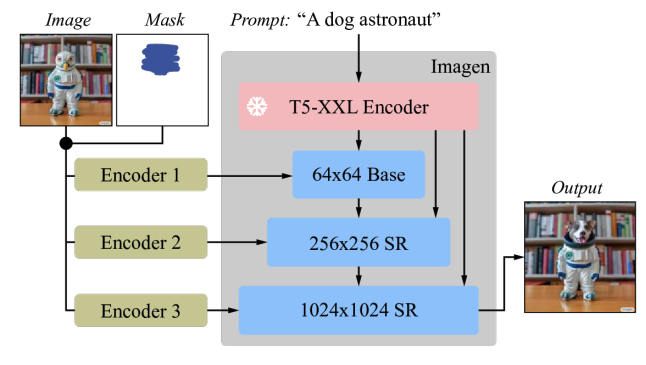

Imagen Editor is a diffusion-based model fine-tuned from Imagen for editing. It targets improved representations of linguistic inputs, fine-grained control, and high-fidelity outputs. Imagen Editor takes three inputs from the user: 1) the image to be edited, 2) a binary mask to specify the edit region, and 3) a text prompt. All three inputs guide the output samples.

Imagen Editor depends on three core techniques for high-quality text-guided image inpainting. First, unlike prior inpainting models (e.g., Palette, Context Attention, Gated Convolution) that apply random box and stroke masks, Imagen Editor employs an object detector masking policy, with an object detector module that produces object masks during training. Object masks are based on detected objects rather than random patches and allow for more principled alignment between edit text prompts and masked regions. Empirically, the method helps the model avoid the prevalent issue of the text prompt being ignored when masked regions are small or only partially cover an object (e.g., CogView2).

Random masks (left) frequently capture background or intersect object boundaries, defining regions that can be plausibly inpainted from image context alone. Object masks (right) are harder to inpaint from image context alone, which encourages models to rely more on text inputs during training.
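The contrast between the two masking policies can be sketched in a few lines of NumPy. This is a minimal illustration rather than the authors' training code: the function names, the `(top, left, bottom, right)` box format, the size fractions, and the fallback to a random box when nothing is detected are all assumptions made for the example.

```python
import numpy as np

def random_box_mask(height, width, rng, min_frac=0.1, max_frac=0.5):
    """Binary mask with one random rectangle (1 = region to inpaint).

    The size fractions are illustrative assumptions, not values from the paper.
    """
    box_h = int(rng.integers(max(1, int(min_frac * height)), int(max_frac * height) + 1))
    box_w = int(rng.integers(max(1, int(min_frac * width)), int(max_frac * width) + 1))
    top = int(rng.integers(0, height - box_h + 1))
    left = int(rng.integers(0, width - box_w + 1))
    mask = np.zeros((height, width), dtype=np.uint8)
    mask[top:top + box_h, left:left + box_w] = 1
    return mask

def object_mask(height, width, detections, rng):
    """Binary mask covering one detected object, chosen at random.

    `detections` is a hypothetical list of (top, left, bottom, right) boxes,
    standing in for the output of an off-the-shelf object detector.
    """
    top, left, bottom, right = detections[int(rng.integers(len(detections)))]
    mask = np.zeros((height, width), dtype=np.uint8)
    mask[top:bottom, left:right] = 1
    return mask

def training_mask(height, width, detections, rng):
    """Prefer an object mask; fall back to a random box if nothing was detected.

    The fallback rule is an assumption made for this sketch.
    """
    if detections:
        return object_mask(height, width, detections, rng)
    return random_box_mask(height, width, rng)
```

Because an object mask hides the whole object, the surrounding pixels carry little information about what belongs there, so the text prompt becomes the main signal the model can use.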

Next, during training and inference, Imagen Editor enhances high-resolution editing by conditioning on a full-resolution (1024×1024 in this work), channel-wise concatenation of the input image and the mask (similar to SR3, Palette, and GLIDE). For the base diffusion 64×64 model and the 64×64→256×256 super-resolution models, we apply a parameterized downsampling convolution (e.g., convolution with a stride), which we empirically find to be critical for high fidelity.

Imagen is fine-tuned for image editing. All of the diffusion models, i.e., the base model and the super-resolution (SR) models, are conditioned on high-resolution 1024×1024 image and mask inputs. To this end, new convolutional image encoders are introduced.
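To make the conditioning path concrete, here is a small NumPy sketch of channel-wise concatenation followed by a strided convolution that brings a full-resolution input down to the resolution of a lower-resolution stage. It is an illustration under stated assumptions, not the model's encoder: zeroing the masked pixels before concatenation, the non-overlapping kernel (stride equal to kernel size), and the output channel count are choices made for the example, and the weights would be learned in a real model.

```python
import numpy as np

def concat_image_and_mask(image, mask):
    """Stack an RGB image and its binary mask along the channel axis.

    image: (H, W, 3) float array; mask: (H, W) array with 1 = region to inpaint.
    Zeroing the masked pixels first is an assumption of this sketch.
    """
    mask = mask[..., None].astype(image.dtype)             # (H, W) -> (H, W, 1)
    masked_image = image * (1.0 - mask)                    # hide pixels to be inpainted
    return np.concatenate([masked_image, mask], axis=-1)   # (H, W, 4)

def strided_conv_downsample(x, kernel):
    """Non-overlapping strided convolution (stride == kernel size).

    x:      (H, W, C_in) input, with H and W divisible by k
    kernel: (k, k, C_in, C_out) weights (learned in practice)
    returns (H // k, W // k, C_out)
    """
    k, _, c_in, _ = kernel.shape
    h, w, _ = x.shape
    # Split each spatial axis into (block index, offset within block).
    patches = x.reshape(h // k, k, w // k, k, c_in)
    # Sum over the k x k window and the input channels for every block.
    return np.einsum("ipjqc,pqco->ijo", patches, kernel)
```

With a 1024×1024 input and a kernel of shape `(16, 16, 4, C_out)`, the output is 64×64, matching the base model's resolution. Unlike fixed average pooling, the learned weights let the network decide which high-resolution details to preserve.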

Finally, at inference we apply classifier-free guidance (CFG) to bias samples toward a particular conditioning, in this case the text prompt. CFG interpolates between the text-conditioned and unconditioned model predictions to ensure strong alignment between the generated image and the input text prompt for text-guided image inpainting. We follow Imagen Video and use high guidance weights with guidance oscillation (a guidance schedule that oscillates within a range of guidance weights). In the base model (the stage-1 64× diffusion), where ensuring strong alignment with the text is most critical, we use a guidance weight schedule that oscillates between 1 and 30. This schedule is chosen for its trade-off between sample fidelity and text-image alignment.
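The guidance step can be written down compactly. The sketch below shows the standard classifier-free guidance combination and one possible oscillating schedule; the strict high/low alternation and the optional constant warm-up are assumptions made for illustration, since the text only states that the base model's weights oscillate between 1 and 30.

```python
import numpy as np

def cfg_combine(eps_uncond, eps_cond, weight):
    """Classifier-free guidance on two noise predictions.

    weight = 0 gives the unconditional prediction, weight = 1 the
    text-conditioned one, and weight > 1 pushes further toward the text.
    """
    return eps_uncond + weight * (eps_cond - eps_uncond)

def oscillating_guidance(num_steps, low=1.0, high=30.0, warmup_steps=0):
    """Hypothetical schedule: `high` during warm-up, then alternate high/low."""
    weights = np.full(num_steps, high, dtype=np.float64)
    steps = np.arange(warmup_steps, num_steps)
    # Every second step after the warm-up drops to the low weight.
    weights[steps[(steps - warmup_steps) % 2 == 1]] = low
    return weights
```

In a sampling loop, each denoising step would call the model twice (with and without the text prompt) and pass both predictions to `cfg_combine` with that step's weight from the schedule.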

EditBench

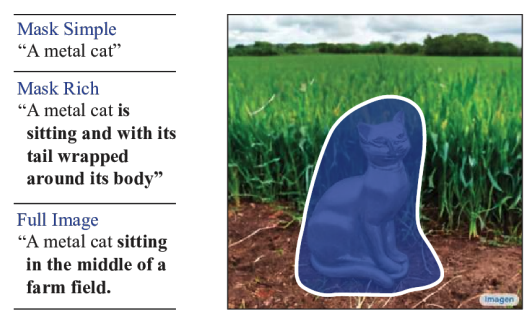

The EditBench dataset for text-guided image inpainting evaluation contains 240 images, with 120 generated and 120 natural images. Generated images are synthesized by Parti, and natural images are drawn from the Visual Genome and Open Images datasets. EditBench captures a wide variety of language, image types, and levels of text prompt specificity (i.e., simple, rich, and full captions). Each example consists of (1) a masked input image, (2) an input text prompt, and (3) a high-quality output image used as a reference for automatic metrics. To provide insight into the relative strengths and weaknesses of different models, EditBench prompts are designed to test fine-grained details across three categories: (1) attributes (e.g., material, color, shape, size, count); (2) object types (e.g., common, rare, text rendering); and (3) scenes (e.g., indoor, outdoor, realistic, or paintings). To understand how different prompt specifications affect model performance, we provide three text prompt types: a single-attribute description of the masked object (Mask Simple), a multi-attribute description of the masked object (Mask Rich), or a description of the entire image (Full Image). Mask Rich, in particular, probes the models' ability to handle complex attribute binding and inclusion.
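One way to picture a single benchmark entry is as a small record holding the image, mask, reference, and one prompt per prompt type. The sketch below is purely illustrative: the class and field names are hypothetical and do not reflect the released file format.

```python
from dataclasses import dataclass
from enum import Enum

class PromptType(Enum):
    MASK_SIMPLE = "mask_simple"  # single-attribute description of the masked object
    MASK_RICH = "mask_rich"      # multi-attribute description of the masked object
    FULL_IMAGE = "full_image"    # description of the entire image

@dataclass(frozen=True)
class EditBenchExample:
    """Hypothetical container for one benchmark entry (names are assumptions)."""
    image_path: str       # input image to be edited
    mask_path: str        # binary mask marking the edit region
    reference_path: str   # high-quality reference output for automatic metrics
    source: str           # "generated" or "natural"
    prompts: dict         # maps PromptType -> prompt text

    def prompt(self, prompt_type):
        """Return the prompt text for the requested prompt type."""
        return self.prompts[prompt_type]
```

Keeping all three prompt types on the same example makes it straightforward to run one model under each level of specificity and compare the results on identical images and masks.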

The full image is used as a reference for successful inpainting. The mask covers the target object with a free-form shape that does not hint at the object. We evaluate Mask Simple, Mask Rich, and Full Image prompts, consistent with conventional text-to-image models.

Due to the intrinsic weaknesses of the existing automatic evaluation metrics for TGIE (CLIPScore and CLIP-R-Precision), we treat human evaluation as the gold standard for EditBench. In the section below, we demonstrate how EditBench is applied to model evaluation.

Evaluation

We evaluate the Imagen Editor model, with object masking (IM) and with random masking (IM-RM), against comparable models, Stable Diffusion (SD) and DALL-E 2 (DL2). Imagen Editor outperforms these models by substantial margins across all EditBench evaluation categories.

For Full Image prompts, single-image human evaluation provides binary answers to confirm whether the image matches the caption. For Mask Simple prompts, single-image human evaluation confirms whether the object and attribute are properly rendered and correctly bound (e.g., for a red cat, a white cat on a red table would be an incorrect binding). Side-by-side human evaluation uses only Mask Rich prompts, comparing IM with each of the other three models (IM-RM, DL2, and SD), and indicates which image matches the caption better for text-image alignment and which image is the most realistic.

Human evaluation. Full Image prompts elicit annotators' overall impression of text-image alignment; Mask Simple and Mask Rich prompts check for the correct inclusion of particular attributes, objects, and attribute binding.
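The two evaluation modes reduce to simple tallies. The sketch below shows one way such annotator responses could be aggregated; the function names and input formats are hypothetical and are not taken from the paper's evaluation tooling.

```python
from collections import defaultdict

def alignment_rate(judgments):
    """Fraction of binary 'correct' judgments per model.

    judgments: iterable of (model_name, is_correct) pairs, one per annotation.
    """
    totals = defaultdict(int)
    correct = defaultdict(int)
    for model, is_correct in judgments:
        totals[model] += 1
        correct[model] += int(is_correct)
    return {model: correct[model] / totals[model] for model in totals}

def preference_rate(winners, model):
    """Fraction of side-by-side comparisons in which `model` was preferred.

    winners: list with the name of the preferred model for each comparison.
    """
    return sum(1 for winner in winners if winner == model) / len(winners)
```

For example, `alignment_rate` would be applied per prompt type for the single-image judgments, while `preference_rate` would be computed separately for each pairing in the side-by-side study.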

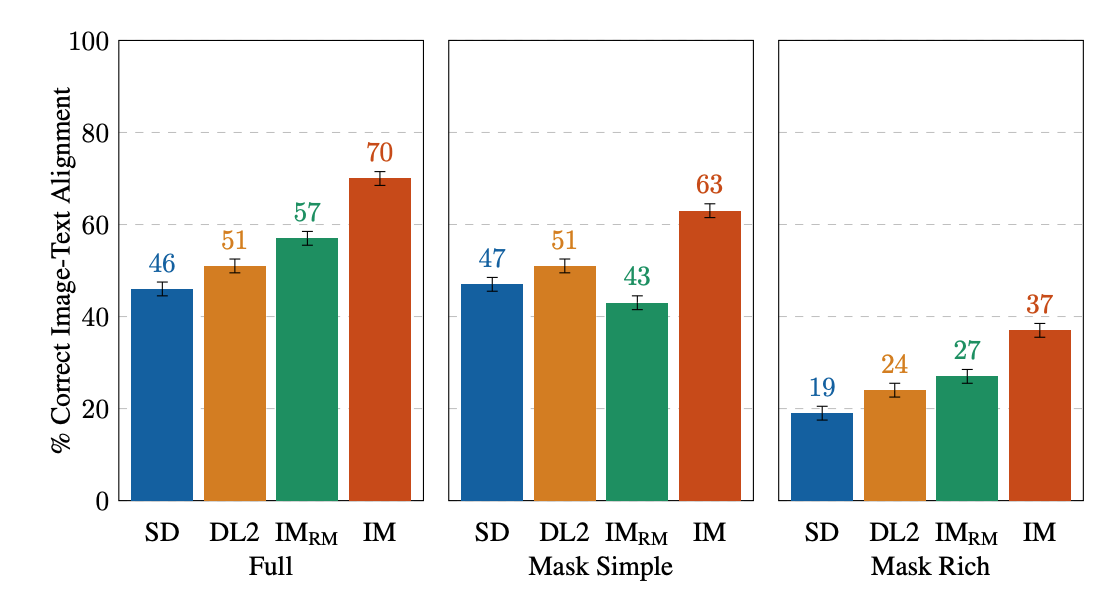

For single-image human evaluation, IM receives the highest ratings across the board (10–13% higher than the second-best model). For the rest, the performance order is IM-RM > DL2 > SD (with a 3–6% difference), except for Mask Simple, where IM-RM falls 4–8% behind. As relatively more semantic content is involved in Full Image and Mask Rich prompts, we conjecture that IM-RM and IM benefit from the higher-performing T5 XXL text encoder.

Single-image human evaluations of text-guided image inpainting on EditBench by prompt type. For Mask Simple and Mask Rich prompts, text-image alignment is correct if the edited image accurately includes every attribute and object specified in the prompt, including the correct attribute binding. Note that because the evaluation designs differ between Full Image and mask-only prompts, their results are less directly comparable.

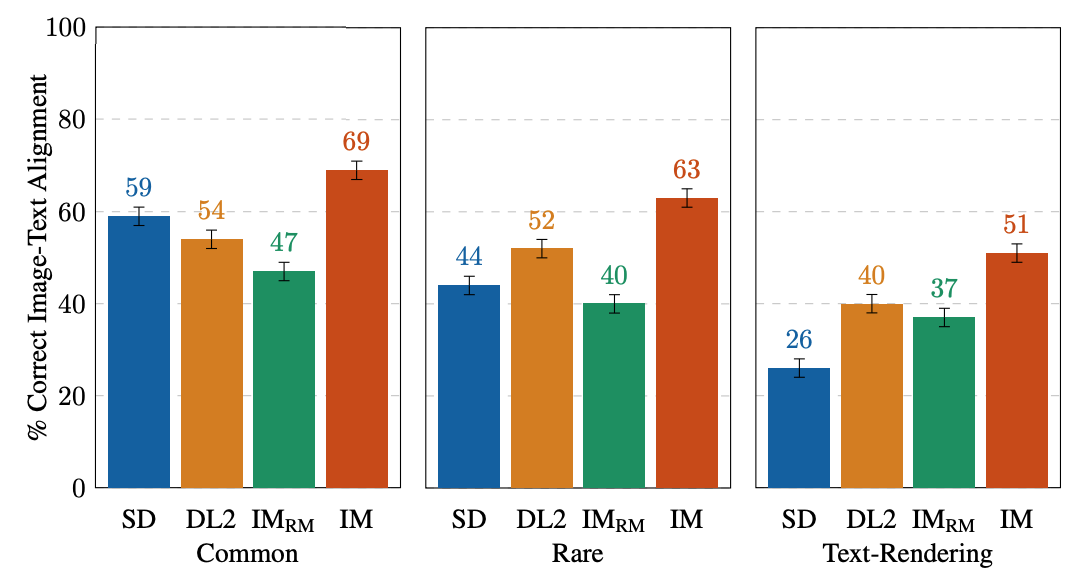

EditBench focuses on fine-grained annotation, so we evaluate models by object and attribute type. For object types, IM leads in all categories, performing 10–11% better than the second-best model on common objects, rare objects, and text rendering.

Single-image human evaluations on EditBench Mask Simple by object type. As a cohort, models are better at rendering objects than at rendering text.

For attribute types, IM is rated significantly higher (13–16%) than the 2nd best performing model, except for count, where DL2 is only 1% behind.

Single-image human evaluations on EditBench Mask Simple by attribute type. Object masking improves adherence to prompt attributes across the board (IM vs. IM-RM).

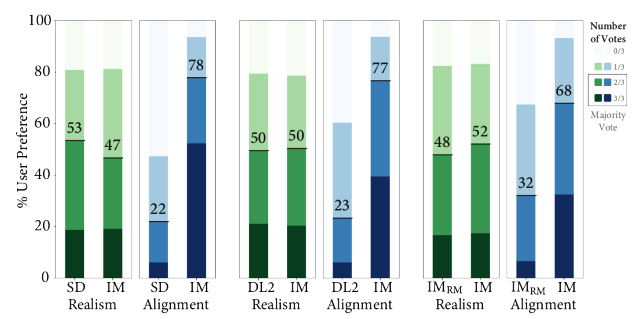

In side-by-side, one-vs-one comparisons against each of the other models, IM leads in text alignment by a substantial margin and is preferred by annotators over SD, DL2, and IM-RM.

Side-by-side human evaluation of image realism and text-image alignment on EditBench Mask Rich prompts. For text-image alignment, Imagen Editor is preferred in all comparisons.

Finally, we show a representative comparison for all models. See the paper for more examples.

Example model outputs for Mask Simple vs. Mask Rich prompts. Object masking improves Imagen Editor's fine-grained adherence to the prompt compared to the same model trained with random masking.

Conclusions

We presented Imagen Editor and EditBench, which make significant advances in text-guided image inpainting and its evaluation. Imagen Editor is a text-guided image inpainting model fine-tuned from Imagen. EditBench is a comprehensive, systematic benchmark for text-guided image inpainting that evaluates performance across multiple dimensions: attributes, objects, and scenes. Note that due to concerns relating to responsible AI, we are not releasing Imagen Editor to the public. EditBench, on the other hand, is released in full for the benefit of the research community.

Acknowledgments

Thanks to Gunjan Baid, Nicole Brichtova, Sara Mahdavi, Kathy Meyer-Hellstern, Zarana Parekh, Anusha Ramesh, Tris Warkentin, Austin Waters, and Vijay Vasudevan for their generous support. We thank Igor Karpov, Isabelle Kraus-Liang, Raghava Ram Pamidigantam, Mahesh Madinala, and all anonymous annotators for coordination to complete the human evaluation tasks. We are grateful to Huiwen Chang, Austin Tarango, and Douglas Eck for feedback on the paper. Thanks to Erika Moreira and Victor Gomez for helping coordinate resources. Finally, thanks to the authors of DALL-E 2 for giving us permission to use the results of their model for research purposes.
